Kubernetes Interview: Pods, Services and Deployments Explained

Master the three core Kubernetes building blocks — Pods, Services, and Deployments — with production-ready YAML manifests, networking internals, and common interview questions.

Kubernetes interview questions consistently focus on three foundational objects: Pods, Services, and Deployments. Understanding how they interact — from container scheduling to network routing to rolling updates — separates candidates who memorize definitions from those who can design production systems.

This guide walks through each primitive with production-grade YAML, explains the internals interviewers care about, and covers the exact questions that come up in DevOps and platform engineering interviews.

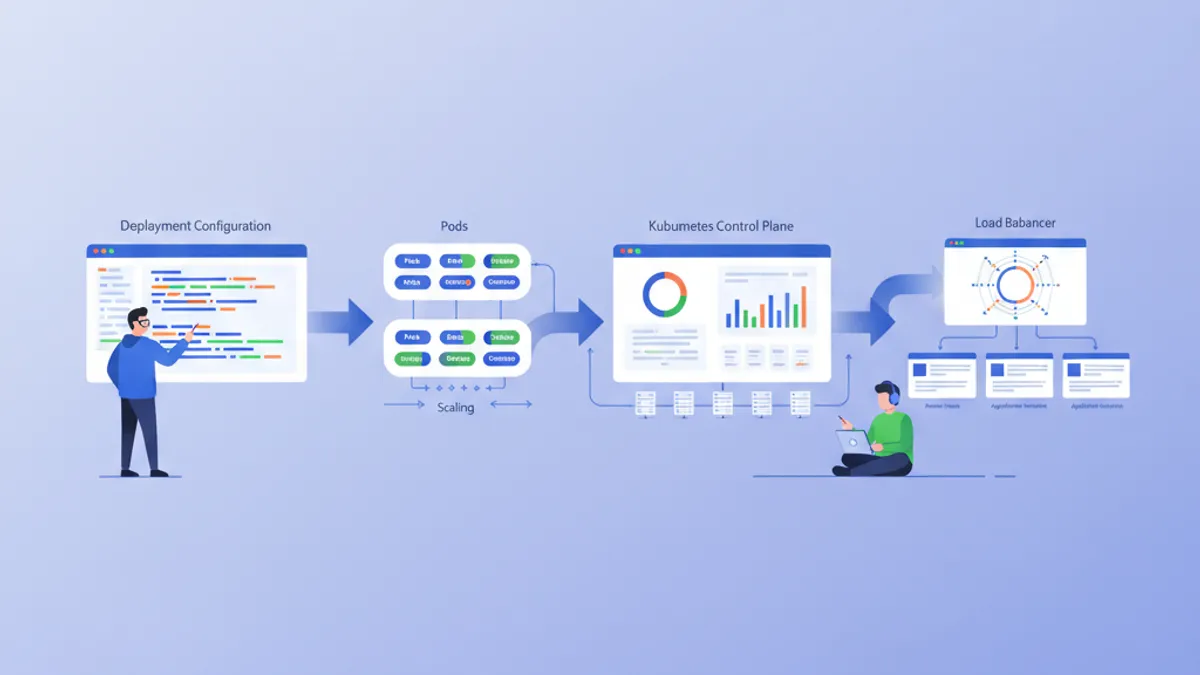

A Pod runs one or more containers on a single node. A Deployment manages Pod replicas and rollouts. A Service provides a stable network endpoint to reach those Pods. Together, these three objects account for the bulk of workload management in a typical Kubernetes cluster, including clusters running v1.35 or later.

Understanding Kubernetes Pods: The Smallest Deployable Unit

A Pod is the atomic unit of scheduling in Kubernetes. It wraps one or more containers that share the same network namespace, IPC namespace, and storage volumes. Every container inside a Pod gets the same IP address and can communicate with sibling containers via localhost.

The single-container Pod is the most common pattern. Multi-container Pods exist for sidecar scenarios — log collectors, service meshes, or init containers that prepare the filesystem before the main process starts.
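As a rough illustration of the sidecar pattern mentioned above, the sketch below pairs an application container with a log-tailing sidecar that shares an emptyDir volume. The Pod name, the busybox image, and the /var/log/app path are assumptions chosen for illustration only.

```yaml
# sidecar-pod.yaml (hypothetical example)
apiVersion: v1
kind: Pod
metadata:
  name: api-with-log-sidecar        # hypothetical name
spec:
  volumes:
    - name: app-logs
      emptyDir: {}                  # scratch volume shared by both containers
  containers:
    - name: app
      image: busybox:1.36           # stand-in for a real application image
      command: ["sh", "-c", "while true; do date >> /var/log/app/app.log; sleep 5; done"]
      volumeMounts:
        - name: app-logs
          mountPath: /var/log/app
    - name: log-tailer
      image: busybox:1.36
      # -F keeps retrying until the log file exists
      command: ["sh", "-c", "tail -F /var/log/app/app.log"]
      volumeMounts:
        - name: app-logs
          mountPath: /var/log/app
          readOnly: true            # the sidecar only reads the logs
```

Both containers share the Pod's network namespace and the mounted volume, which is what makes the sidecar pattern work without any extra wiring.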

```yaml
# api-pod.yaml
apiVersion: v1
kind: Pod
metadata:
  name: api-server
  labels:
    app: api
    version: v2
spec:
  containers:
    - name: api
      image: registry.example.com/api:3.1.0
      ports:
        - containerPort: 8080
      resources:
        requests:
          cpu: "250m"       # 0.25 CPU core reserved
          memory: "256Mi"   # 256 MiB reserved
        limits:
          cpu: "500m"       # hard cap at 0.5 core
          memory: "512Mi"   # OOMKilled beyond this
      readinessProbe:
        httpGet:
          path: /healthz
          port: 8080
        initialDelaySeconds: 5
        periodSeconds: 10
      livenessProbe:
        httpGet:
          path: /healthz
          port: 8080
        initialDelaySeconds: 15
        periodSeconds: 20
```

The resources block is critical in interviews. requests determine scheduling: the scheduler places the Pod on a node with enough unreserved capacity. limits enforce runtime ceilings: exceeding the memory limit triggers an OOMKill, while exceeding the CPU limit causes throttling.

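Omitting requests makes scheduling unpredictable. The scheduler (not the kubelet) picks a node based on requests, so a Pod with no requests can land on a node that cannot actually sustain the workload. When only a limit is set, Kubernetes copies the limit into the request, which may reserve more capacity than intended. Always set both requests and limits explicitly for production Pods.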
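One way to guard against Pods that ship without requests or limits is a namespace-level LimitRange. The sketch below is a minimal example; the object name, the namespace, and the specific values are assumptions, not recommendations.

```yaml
# limitrange.yaml (hypothetical example)
apiVersion: v1
kind: LimitRange
metadata:
  name: container-defaults     # hypothetical name
  namespace: default
spec:
  limits:
    - type: Container
      defaultRequest:          # applied when a container sets no request
        cpu: "100m"
        memory: "128Mi"
      default:                 # applied when a container sets no limit
        cpu: "500m"
        memory: "512Mi"
```

Defaults like these are a safety net rather than a substitute for sizing each workload explicitly.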

Pod Lifecycle Phases and Restart Policies

Kubernetes tracks every Pod through five phases: Pending, Running, Succeeded, Failed, and Unknown. Interviewers often ask what triggers each transition.

| Phase | Meaning | Common Cause |
|-------|---------|-------------|
| Pending | Accepted by the cluster but not yet running | Waiting to be scheduled, image pull, insufficient resources |
| Running | Bound to a node with at least one container active | Normal operation |
| Succeeded | All containers exited 0 and will not restart | Batch jobs, one-shot tasks |
| Failed | All containers terminated, at least one with a non-zero exit | Application crash, OOMKill |
| Unknown | Pod state cannot be determined | Network partition, node failure |

The restartPolicy field controls what happens after a container exits. Always (the default, and the only value allowed in a Deployment's Pod template) restarts the container regardless of exit code. OnFailure restarts only on non-zero exits, which is useful for Jobs. Never leaves the container stopped, which suits one-shot debug Pods.

```yaml
# batch-job-pod.yaml
apiVersion: v1
kind: Pod
metadata:
  name: data-migration
spec:
  restartPolicy: OnFailure   # restart only on non-zero exit
  containers:
    - name: migrate
      image: registry.example.com/migrate:1.0.0
      command: ["python", "migrate.py"]
      env:
        - name: DATABASE_URL
          valueFrom:
            secretKeyRef:
              name: db-credentials
              key: connection-string
```

The valueFrom.secretKeyRef pattern keeps sensitive data out of the manifest. Kubernetes Secrets are only base64-encoded and are stored unencrypted in etcd by default. For production, enable encryption at rest with an EncryptionConfiguration file on the API server, or use an external secret manager like HashiCorp Vault.
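For reference, a Secret that would satisfy the secretKeyRef above might look like the sketch below. The connection string is a placeholder invented for illustration; real credentials should not be committed to version control.

```yaml
# db-credentials-secret.yaml (hypothetical example)
apiVersion: v1
kind: Secret
metadata:
  name: db-credentials          # matches secretKeyRef.name in the Pod
type: Opaque
stringData:                     # written as plain text, stored base64-encoded under data
  connection-string: "postgres://user:password@db.example.com:5432/app"   # placeholder value
```

The stringData field is a write-only convenience: reading the Secret back shows the value base64-encoded under data, which is encoding rather than encryption.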

Kubernetes Deployments: Declarative Rollouts and Scaling

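A Deployment creates and manages ReplicaSets, which in turn manage Pods. The Deployment controller watches the desired state and reconciles it with reality: adding Pods when scaling up, replacing them during rollouts, and keeping earlier ReplicaSets so a bad release can be rolled back. A rollout whose new Pods never become ready stalls rather than reverting on its own, so rollback is an explicit step.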

Direct Pod creation is almost never appropriate in production. Deployments provide replica management, rolling updates, rollback history, and pause/resume capabilities.

```yaml
# api-deployment.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: api-server
  labels:
    app: api
spec:
  replicas: 3                 # run 3 identical Pods
  revisionHistoryLimit: 5     # keep 5 old ReplicaSets for rollback
  selector:
    matchLabels:
      app: api                # must match Pod template labels
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 1       # at most 1 Pod down during update
      maxSurge: 1             # at most 1 extra Pod during update
  template:
    metadata:
      labels:
        app: api
        version: v2
    spec:
      containers:
        - name: api
          image: registry.example.com/api:3.1.0
          ports:
            - containerPort: 8080
          resources:
            requests:
              cpu: "250m"
              memory: "256Mi"
            limits:
              cpu: "500m"
              memory: "512Mi"
          readinessProbe:
            httpGet:
              path: /healthz
              port: 8080
            initialDelaySeconds: 5
            periodSeconds: 10
```

The strategy.rollingUpdate section defines how a rollout proceeds without downtime. With replicas: 3, maxUnavailable: 1, and maxSurge: 1, the controller keeps at least 2 Pods available and runs at most 4 Pods in total at any moment. Within those bounds it starts new Pods, waits for them to pass readiness checks, and terminates old ones until all replicas run the new version.
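When losing even one replica of capacity during a rollout is unacceptable, the strategy can be tightened. The fragment below is a sketch of that variant, meant to be merged into the Deployment's spec; the minReadySeconds value is an assumption chosen for illustration.

```yaml
# Fragment of a Deployment spec (stricter rollout variant)
spec:
  replicas: 3
  minReadySeconds: 10        # a new Pod must stay ready this long before it counts as available
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 0      # never drop below 3 available Pods
      maxSurge: 1            # allow 1 extra Pod, so at most 4 exist during the rollout
```

The trade-off is a slower rollout, since each old Pod is removed only after its replacement is available, plus the cluster needs headroom for the one surge Pod.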

Rolling Back a Failed Kubernetes Deployment

Rollbacks are a high-frequency interview topic. The Deployment controller keeps old ReplicaSets around (controlled by revisionHistoryLimit), so a rollback needs no image rebuild: the previous Pod template is already stored in the cluster.

```bash
# Check rollout status
kubectl rollout status deployment/api-server

# View revision history
kubectl rollout history deployment/api-server

# Roll back to previous version
kubectl rollout undo deployment/api-server

# Roll back to specific revision
kubectl rollout undo deployment/api-server --to-revision=3
```

The undo command patches the Deployment spec to match the target revision's Pod template. The rolling update strategy then applies as normal, gradually replacing current Pods with the rollback version.
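Revision history is easier to navigate when each revision records why it was made. One common approach, sketched below, is the kubernetes.io/change-cause annotation, whose value appears in the CHANGE-CAUSE column of kubectl rollout history. The message text is a hypothetical example.

```yaml
# Fragment of api-deployment.yaml metadata
metadata:
  name: api-server
  annotations:
    kubernetes.io/change-cause: "Upgrade api image to 3.1.0"   # hypothetical message
```

The annotation has to be updated alongside each change to the Pod template; otherwise successive revisions show the same stale cause.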

Kubernetes Services: Stable Networking for Dynamic Pods

Pods are ephemeral. Whenever a Pod is replaced (after a rollout, an eviction, or a node failure), its replacement gets a new IP address. A Service solves this by providing a stable virtual IP (ClusterIP) and DNS name that routes traffic to healthy Pods matching a label selector.

The kube-proxy component on each node programs iptables or IPVS rules to distribute traffic across backend Pods. When a Pod fails its readiness probe, the Endpoints controller removes it from the Service — no traffic reaches unhealthy instances.

```yaml
# api-service.yaml
apiVersion: v1
kind: Service
metadata:
  name: api-service
spec:
  type: ClusterIP           # internal-only by default
  selector:
    app: api                # routes to Pods with this label
  ports:
    - name: http
      port: 80              # Service port (what clients use)
      targetPort: 8080      # Pod port (where container listens)
      protocol: TCP
```

Once created, any Pod in the cluster can reach this Service at api-service.default.svc.cluster.local (or just api-service within the same namespace). CoreDNS handles the resolution.

Service Types: ClusterIP, NodePort, and LoadBalancer

Interviewers expect candidates to explain the three main Service types and when each applies.

| Type | Access Scope | Use Case |
|------|-------------|----------|
| ClusterIP | Cluster-internal only | Backend APIs, databases, caches |
| NodePort | External via <NodeIP>:<NodePort> | Development, bare-metal clusters |
| LoadBalancer | External via cloud LB | Production traffic on AWS/GCP/Azure |
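For comparison with the ClusterIP and LoadBalancer manifests in this guide, a NodePort Service could look roughly like the sketch below. The Service name and the chosen port 30080 are assumptions for illustration.

```yaml
# api-nodeport.yaml (hypothetical example)
apiVersion: v1
kind: Service
metadata:
  name: api-nodeport
spec:
  type: NodePort
  selector:
    app: api
  ports:
    - name: http
      port: 80              # Service port inside the cluster
      targetPort: 8080      # container port
      nodePort: 30080       # opened on every node; default allowed range is 30000-32767
      protocol: TCP
```

Omitting nodePort lets Kubernetes pick a free port from the range, which avoids collisions when several teams share a cluster.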

```yaml
# api-loadbalancer.yaml
apiVersion: v1
kind: Service
metadata:
  name: api-public
  annotations:
    service.beta.kubernetes.io/aws-load-balancer-type: "nlb"   # AWS NLB
spec:
  type: LoadBalancer
  selector:
    app: api
  ports:
    - name: https
      port: 443
      targetPort: 8080
      protocol: TCP
```

The LoadBalancer type triggers the cloud provider's load balancer controller to provision an external endpoint. On AWS, this creates a Classic Load Balancer or an NLB depending on annotations and the controller in use; ALBs are provisioned from Ingress resources rather than Services. On bare-metal clusters without a cloud integration, MetalLB or kube-vip fill this role.

Around the Kubernetes v1.35 timeframe, the community Ingress NGINX controller (ingress-nginx) was announced for retirement; the Ingress API itself remains available. The Gateway API is now the recommended approach for HTTP routing, TLS termination, and traffic splitting. New clusters should adopt Gateway API from the start.
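To make the Gateway API recommendation concrete, here is a minimal sketch of a Gateway that terminates TLS and an HTTPRoute that forwards to api-service. It assumes the Gateway API CRDs and a Gateway controller are installed; the GatewayClass name, hostname, and TLS Secret name are hypothetical.

```yaml
# api-gateway.yaml (hypothetical example)
apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  name: api-gateway
spec:
  gatewayClassName: example-gateway-class   # assumption: supplied by the installed controller
  listeners:
    - name: https
      protocol: HTTPS
      port: 443
      hostname: api.example.com
      tls:
        mode: Terminate                      # TLS ends at the Gateway
        certificateRefs:
          - kind: Secret
            name: api-tls-cert               # hypothetical TLS Secret
---
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: api-route
spec:
  parentRefs:
    - name: api-gateway                      # attach this route to the Gateway above
  hostnames:
    - api.example.com
  rules:
    - matches:
        - path:
            type: PathPrefix
            value: /
      backendRefs:
        - name: api-service                  # the ClusterIP Service from this guide
          port: 80
```

Splitting the listener (Gateway) from the routing rules (HTTPRoute) lets a platform team own the entry point while application teams manage their own routes.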

Connecting Deployments to Services with Label Selectors

The link between a Deployment and a Service is the label selector — nothing more. There is no explicit reference between the two objects. The Service watches for Pods whose labels match its spec.selector and automatically maintains an Endpoints list.

This decoupled architecture means a single Service can route to Pods from multiple Deployments (useful for canary releases), and a Deployment can be exposed by multiple Services (different ports, different access levels).

```yaml
# canary-deployment.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: api-server-canary
spec:
  replicas: 1
  selector:
    matchLabels:
      app: api                  # same label as main Deployment
      version: v3-canary        # keeps this Deployment's Pods distinct
  template:
    metadata:
      labels:
        app: api                # Service picks this up automatically
        version: v3-canary
    spec:
      containers:
        - name: api
          image: registry.example.com/api:3.2.0-rc1
          ports:
            - containerPort: 8080
```

With the main Deployment running 3 replicas and the canary running 1, the Service distributes roughly 25% of traffic to the canary. No Service configuration change is needed, because the Service selects only on app: api. Kubernetes documentation advises against overlapping selectors between controllers, so the canary's selector here also includes its version label; the stable Deployment would ideally be equally specific.

Horizontal Pod Autoscaling for Kubernetes Deployments

Static replica counts waste resources during low traffic and buckle under spikes. The HorizontalPodAutoscaler (HPA) adjusts replica count based on observed metrics.

```yaml
# api-hpa.yaml
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: api-hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: api-server
  minReplicas: 2
  maxReplicas: 10
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 70   # scale up above 70% CPU
    - type: Resource
      resource:
        name: memory
        target:
          type: Utilization
          averageUtilization: 80
  behavior:
    scaleDown:
      stabilizationWindowSeconds: 300   # wait 5 min before scaling down
```

The HPA controller re-evaluates metrics every 15 seconds by default. Configurable HPA tolerance, which first appeared as an alpha feature in v1.33 and is carried forward in v1.35, replaces the fixed 10% threshold with a per-HPA setting. This means teams running latency-sensitive workloads can now set tighter scaling triggers.

The behavior.scaleDown.stabilizationWindowSeconds prevents flapping — rapid scale-up/scale-down cycles caused by bursty traffic patterns.
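The behavior block can also shape how fast replicas are added or removed. The fragment below is a sketch with illustrative values; the tolerance field in particular assumes a cluster where the HPAConfigurableTolerance feature is enabled, and should be dropped otherwise.

```yaml
# Fragment of api-hpa.yaml (illustrative values)
spec:
  behavior:
    scaleUp:
      stabilizationWindowSeconds: 0      # react to spikes immediately
      policies:
        - type: Percent
          value: 100                     # at most double the replica count
          periodSeconds: 60              # within any 60-second window
    scaleDown:
      stabilizationWindowSeconds: 300    # use the highest recommendation from the last 5 min
      tolerance: 0.05                    # assumption: requires HPAConfigurableTolerance
      policies:
        - type: Pods
          value: 1                       # remove at most 1 Pod
          periodSeconds: 60              # within any 60-second window
```

Scaling up aggressively and down conservatively is a common asymmetry: over-provisioning for a few minutes usually costs less than dropping requests.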

Common Kubernetes Interview Questions and Answers

These questions appear repeatedly in DevOps and platform engineering interviews. Each answer targets the depth interviewers expect.

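What happens when a Pod exceeds its memory limit?

The Linux kernel's OOM killer terminates the offending process with SIGKILL, and the container exits with code 137 (reason OOMKilled). The kubelet then restarts the container in place according to the Pod's restartPolicy, with exponential backoff (CrashLoopBackOff) on repeated failures. The Deployment controller only creates a replacement Pod if the Pod itself is deleted or evicted.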

How does Kubernetes route traffic to a specific Pod version during canary releases?

The Service selector matches on shared labels (e.g., app: api). Both the stable and canary Deployments use this label. Traffic distribution is proportional to the number of ready endpoints — 3 stable replicas + 1 canary replica means ~25% canary traffic. For precise traffic splitting (e.g., 5%), use Gateway API HTTPRoute with weighted backends.
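A weighted split of the kind described above could be sketched as follows. It assumes Gateway API is installed and that two separate Services exist, one selecting only stable Pods and one selecting only canary Pods; the names api-gateway, api-stable, and api-canary are hypothetical.

```yaml
# api-canary-split.yaml (hypothetical example)
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: api-canary-split
spec:
  parentRefs:
    - name: api-gateway          # hypothetical Gateway
  rules:
    - backendRefs:
        - name: api-stable       # hypothetical Service for stable Pods only
          port: 80
          weight: 95
        - name: api-canary       # hypothetical Service for canary Pods only
          port: 80
          weight: 5
```

Weights are relative, so 95 and 5 send about 5% of requests to the canary regardless of how many replicas each side runs.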

What is the difference between readinessProbe and livenessProbe?

A readiness probe controls whether the Pod receives traffic from the Service. A failing readiness probe removes the Pod from the Endpoints list but does not restart it. A liveness probe controls whether the container gets restarted. A failing liveness probe triggers a container restart. Both can use HTTP, TCP, gRPC, or exec checks.
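The difference is easiest to see when the two probes check different things. In the container-spec fragment below, the /ready path and the thresholds are assumptions for illustration: readiness asks whether the app can serve requests, while liveness only asks whether the process still accepts connections.

```yaml
# Fragment of a container spec (illustrative values)
readinessProbe:
  httpGet:
    path: /ready               # hypothetical endpoint that also checks dependencies
    port: 8080
  periodSeconds: 5
  failureThreshold: 3          # removed from Service endpoints after 3 failures
livenessProbe:
  tcpSocket:
    port: 8080                 # passes as long as the port accepts connections
  initialDelaySeconds: 15
  periodSeconds: 20
  failureThreshold: 3          # container restarted after 3 failures
```

Keeping dependency checks out of the liveness probe avoids restart storms when a downstream service, rather than the container itself, is unhealthy.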

How do rolling updates guarantee zero downtime?

The Deployment controller creates new Pods before terminating old ones, governed by maxSurge and maxUnavailable. New Pods must pass readiness probes before old Pods are drained. A terminating Pod first receives SIGTERM, and terminationGracePeriodSeconds (default: 30s) gives in-flight requests time to complete before SIGKILL.
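Graceful shutdown is often tuned with a preStop hook, as in the sketch below. The 10-second sleep and the 45-second grace period are illustrative assumptions, and the hook assumes the image ships a shell.

```yaml
# Fragment of a Deployment Pod template (illustrative values)
spec:
  template:
    spec:
      terminationGracePeriodSeconds: 45        # total budget before SIGKILL
      containers:
        - name: api
          image: registry.example.com/api:3.1.0
          lifecycle:
            preStop:
              exec:
                # brief pause so endpoint removal can propagate before SIGTERM
                command: ["sh", "-c", "sleep 10"]
```

Time spent in the preStop hook counts against the grace period, so the period should cover the hook plus the application's own shutdown time.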

Preparing for a Kubernetes interview requires hands-on YAML practice, not just theory. The DevOps interview questions on SharpSkill cover these topics with interactive drills.

Conclusion

- Pods are the scheduling unit: always set resource requests and limits, and configure both readiness and liveness probes

- Deployments manage replica count, rolling updates, and rollback history via ReplicaSets; avoid creating bare Pods in production

- Services provide stable networking through label selectors and virtual IPs: ClusterIP for internal traffic, LoadBalancer for external

- The link between Deployments and Services is purely label-based, enabling canary patterns without configuration changes

- HPA scales Deployments based on CPU/memory metrics; use stabilizationWindowSeconds to prevent flapping

- Gateway API is the recommended successor to the retiring Ingress NGINX controller; new projects should adopt it from the start

- Practice with real YAML manifests and kubectl commands rather than memorizing definitions
