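Spring Boot Logging in 2026: Structured Production Logs with Logback and JSON

A complete guide to structured logging in Spring Boot. It covers Logback JSON configuration, MDC for tracing, production best practices, and ELK Stack integration.

Traditional text logs quickly become unmanageable in production. When hundreds of instances emit thousands of log lines per second, finding one specific error is a nightmare. Structured logs in JSON format change this by making every event queryable and machine-parseable.

Spring Boot 3.4 and later support structured JSON logging natively, with no external dependencies. For earlier versions, Logstash Logback Encoder remains the standard solution.

Why adopt structured logs

Limits of traditional text logs

A typical text log line looks like this:

2026-03-27 10:15:32.456 INFO [order-service,abc123] c.e.s.OrderService - Order created for user john@example.com, amount: 150.00€, items: 3

This format causes several problems in production. Extracting a specific value requires complex, brittle regular expressions. Correlating events across services depends on strict conventions that each team interprets differently. Analysis tools such as Elasticsearch cannot index these unstructured strings efficiently.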
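
To make the brittleness concrete, here is a minimal sketch that extracts the amount from the sample line with a regular expression. The class name TextLogParser and the pattern are hypothetical and written only for this illustration. The extraction works until someone rewords the message.

```java
import java.util.Optional;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

final class TextLogParser {
    // Hypothetical pattern tailored to the sample line; not part of any library.
    private static final Pattern AMOUNT = Pattern.compile("amount: (\\d+\\.\\d{2})");

    // Returns the amount as text, or empty when the line does not match the pattern.
    static Optional<String> extractAmount(String line) {
        Matcher m = AMOUNT.matcher(line);
        return m.find() ? Optional.of(m.group(1)) : Optional.empty();
    }

    public static void main(String[] args) {
        String original = "... Order created for user john@example.com, amount: 150.00€, items: 3";
        String reworded = "... Order created for user john@example.com, total=150.00 EUR, items: 3";
        System.out.println(extractAmount(original)); // Optional[150.00]
        System.out.println(extractAmount(reworded)); // Optional.empty
    }
}
```

A harmless change in wording silently breaks the extraction, and nothing fails at compile time or at log time.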

Benefits of the JSON format

Expressed as JSON, the same event is immediately usable:

{
  "@timestamp": "2026-03-27T10:15:32.456Z",
  "level": "INFO",
  "logger": "com.example.service.OrderService",
  "message": "Order created",
  "service": "order-service",
  "traceId": "abc123",
  "userId": "john@example.com",
  "orderId": "ORD-789456",
  "amount": 150.00,
  "currency": "EUR",
  "itemCount": 3
}

Every field can now be filtered and aggregated. A single Elasticsearch query finds every order above 100 euros from the last 15 minutes. Kibana dashboards visualize trends without manual parsing.
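
The same idea can be sketched in plain Java. Once events are typed fields rather than free text, the "orders above 100 euros in the last 15 minutes" question becomes a simple filter. The record OrderEvent and the class StructuredQuerySketch are hypothetical names for this illustration; a real deployment would run the equivalent query inside Elasticsearch.

```java
import java.time.Duration;
import java.time.Instant;
import java.util.List;

final class StructuredQuerySketch {
    // Hypothetical in-memory view of a parsed log event; fields mirror the JSON keys.
    record OrderEvent(Instant timestamp, String orderId, double amount, String currency) {}

    // Keeps events in the given currency, above minAmount, no older than `window` before `now`.
    static List<OrderEvent> recentLargeOrders(List<OrderEvent> events, Instant now,
                                              Duration window, double minAmount, String currency) {
        Instant cutoff = now.minus(window);
        return events.stream()
                .filter(e -> !e.timestamp().isBefore(cutoff))
                .filter(e -> e.currency().equals(currency))
                .filter(e -> e.amount() > minAmount)
                .toList();
    }

    public static void main(String[] args) {
        Instant now = Instant.parse("2026-03-27T10:20:00Z");
        List<OrderEvent> events = List.of(
                new OrderEvent(Instant.parse("2026-03-27T10:15:32.456Z"), "ORD-789456", 150.00, "EUR"),
                new OrderEvent(Instant.parse("2026-03-27T09:00:00Z"), "ORD-2", 500.00, "EUR"));
        // Only ORD-789456 is both recent enough and above the threshold.
        System.out.println(recentLargeOrders(events, now, Duration.ofMinutes(15), 100.0, "EUR"));
    }
}
```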

Native configuration in Spring Boot 3.4 and later

Enabling structured JSON logs

Spring Boot 3.4 introduced native support for structured logging through the logging.structured properties. This approach needs no additional dependencies.

# application.yml
# Native structured logging configuration for Spring Boot 3.4+
logging:
  structured:
    # Output format: ecs (Elastic), logstash, gelf
    format:
      console: ecs
      file: ecs
  file:
    name: /var/log/app/application.log
  level:
    root: INFO
    com.example: DEBUG

The ECS (Elastic Common Schema) format is directly compatible with Elasticsearch and Kibana without further configuration.

Customizing JSON fields

Spring Boot exposes additional properties for adding service-level fields to every log entry.

# application.yml
# Custom fields in structured logs
logging:
  structured:
    format:
      console: ecs
    ecs:
      # Service information added to every log
      service:
        name: ${spring.application.name}
        version: ${app.version:1.0.0}
        environment: ${spring.profiles.active:default}
        node-name: ${HOSTNAME:unknown}

// Programmatic configuration for additional fields
// Register the class with the property:
//   logging.structured.json.customizer=com.example.logging.config.StaticFieldsCustomizer
package com.example.logging.config;

import ch.qos.logback.classic.spi.ILoggingEvent;
import org.springframework.boot.json.JsonWriter;
import org.springframework.boot.logging.structured.StructuredLoggingJsonMembersCustomizer;

public class StaticFieldsCustomizer implements StructuredLoggingJsonMembersCustomizer<ILoggingEvent> {

    @Override
    public void customize(JsonWriter.Members<ILoggingEvent> members) {
        // Adds static fields to all logs
        members.add("team", "backend");
        members.add("region", System.getenv().getOrDefault("AWS_REGION", "unknown"));
        // Stack trace output is tuned through the logging.structured.json.stacktrace.*
        // properties in newer Spring Boot releases, not through this customizer.
    }
}

These fields appear on every log line, which makes filtering by team or region in dashboards straightforward.

Classic Logback configuration with a JSON encoder

Logstash Encoder dependency

For Spring Boot versions before 3.4, or when advanced customization is required, Logstash Logback Encoder remains the standard solution.

<!-- pom.xml -->
<!-- Dependency for JSON logging with Logback -->
<dependency>
    <groupId>net.logstash.logback</groupId>
    <artifactId>logstash-logback-encoder</artifactId>
    <version>7.4</version>
</dependency>

Full Logback configuration

The logback-spring.xml file gives full control over the output format.

<?xml version="1.0" encoding="UTF-8"?>
<!-- src/main/resources/logback-spring.xml -->
<!-- Logback configuration for structured JSON logs -->
<configuration>

    <!-- Spring Boot properties -->
    <springProperty scope="context" name="appName" source="spring.application.name" defaultValue="app"/>
    <springProperty scope="context" name="appVersion" source="app.version" defaultValue="1.0.0"/>

    <!-- JSON console appender for production -->
    <appender name="JSON_CONSOLE" class="ch.qos.logback.core.ConsoleAppender">
        <encoder class="net.logstash.logback.encoder.LogstashEncoder">
            <!-- Custom fields added to every log -->
            <customFields>{"service":"${appName}","version":"${appVersion}"}</customFields>
            <!-- Includes MDC (tracing context) -->
            <includeMdcKeyName>traceId</includeMdcKeyName>
            <includeMdcKeyName>spanId</includeMdcKeyName>
            <includeMdcKeyName>userId</includeMdcKeyName>
            <includeMdcKeyName>requestId</includeMdcKeyName>
            <!-- ISO 8601 timestamp with a colon-separated offset (XXX) -->
            <timestampPattern>yyyy-MM-dd'T'HH:mm:ss.SSSXXX</timestampPattern>
            <!-- Shortened stack traces, root cause first -->
            <throwableConverter class="net.logstash.logback.stacktrace.ShortenedThrowableConverter">
                <maxDepthPerThrowable>30</maxDepthPerThrowable>
                <maxLength>4096</maxLength>
                <shortenedClassNameLength>36</shortenedClassNameLength>
                <rootCauseFirst>true</rootCauseFirst>
            </throwableConverter>
        </encoder>
    </appender>

    <!-- Rolling JSON file appender -->
    <appender name="JSON_FILE" class="ch.qos.logback.core.rolling.RollingFileAppender">
        <file>/var/log/${appName}/application.json</file>
        <!-- %i and maxFileSize require the size-and-time based policy -->
        <rollingPolicy class="ch.qos.logback.core.rolling.SizeAndTimeBasedRollingPolicy">
            <fileNamePattern>/var/log/${appName}/application.%d{yyyy-MM-dd}.%i.json.gz</fileNamePattern>
            <maxHistory>30</maxHistory>
            <maxFileSize>100MB</maxFileSize>
            <totalSizeCap>3GB</totalSizeCap>
        </rollingPolicy>
        <encoder class="net.logstash.logback.encoder.LogstashEncoder">
            <customFields>{"service":"${appName}","version":"${appVersion}"}</customFields>
        </encoder>
    </appender>

    <!-- Text appender for development -->
    <appender name="TEXT_CONSOLE" class="ch.qos.logback.core.ConsoleAppender">
        <encoder>
            <pattern>%d{HH:mm:ss.SSS} %highlight(%-5level) [%thread] %cyan(%logger{36}) - %msg%n</pattern>
        </encoder>
    </appender>

    <!-- Activation by Spring profile -->
    <springProfile name="prod,staging">
        <root level="INFO">
            <appender-ref ref="JSON_CONSOLE"/>
            <appender-ref ref="JSON_FILE"/>
        </root>
    </springProfile>

    <springProfile name="dev,local">
        <root level="DEBUG">
            <appender-ref ref="TEXT_CONSOLE"/>
        </root>
    </springProfile>

</configuration>

This configuration enables JSON logs in production and staging, and keeps readable text logs in development.

<springProfile> switches between text and JSON output according to the active profile, with no change to the configuration file.

MDC for distributed tracing

Propagating the trace context

MDC (Mapped Diagnostic Context) attaches contextual information, such as request or trace identifiers, to every log entry written by the current thread.

// Filter for automatic trace context injection
package com.example.logging.filter;

import jakarta.servlet.FilterChain;
import jakarta.servlet.ServletException;
import jakarta.servlet.http.HttpServletRequest;
import jakarta.servlet.http.HttpServletResponse;
import org.slf4j.MDC;
import org.springframework.core.Ordered;
import org.springframework.core.annotation.Order;
import org.springframework.stereotype.Component;
import org.springframework.web.filter.OncePerRequestFilter;

import java.io.IOException;
import java.util.UUID;

@Component
@Order(Ordered.HIGHEST_PRECEDENCE)
public class TracingFilter extends OncePerRequestFilter {

    // Standard MDC keys for tracing
    private static final String TRACE_ID_KEY = "traceId";
    private static final String SPAN_ID_KEY = "spanId";
    private static final String REQUEST_ID_KEY = "requestId";
    private static final String USER_ID_KEY = "userId";

    @Override
    protected void doFilterInternal(
            HttpServletRequest request,
            HttpServletResponse response,
            FilterChain filterChain) throws ServletException, IOException {
        try {
            // Retrieve or generate trace identifiers
            String traceId = extractOrGenerate(request, "X-Trace-Id");
            String spanId = generateSpanId();
            String requestId = extractOrGenerate(request, "X-Request-Id");
            String userId = request.getHeader("X-User-Id");

            // Inject into MDC to appear in all logs
            MDC.put(TRACE_ID_KEY, traceId);
            MDC.put(SPAN_ID_KEY, spanId);
            MDC.put(REQUEST_ID_KEY, requestId);
            if (userId != null) {
                MDC.put(USER_ID_KEY, userId);
            }

            // Echo the identifiers in the response so callers can correlate
            response.setHeader("X-Trace-Id", traceId);
            response.setHeader("X-Request-Id", requestId);

            filterChain.doFilter(request, response);
        } finally {
            // Clean MDC after each request; pooled threads are reused
            MDC.clear();
        }
    }

    // Uses the incoming header value when present, otherwise a random 16-character hex id
    private String extractOrGenerate(HttpServletRequest request, String header) {
        String value = request.getHeader(header);
        return value != null ? value : UUID.randomUUID().toString().replace("-", "").substring(0, 16);
    }

    // Random 8-character hex id
    private String generateSpanId() {
        return UUID.randomUUID().toString().replace("-", "").substring(0, 8);
    }
}

Every log entry emitted while the request is being processed automatically carries these identifiers.
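
The cleanup in the finally block matters because servlet containers reuse pooled threads. The following sketch imitates a per-thread context with a plain ThreadLocal and shows a value leaking into the next task when it is not cleared. ThreadContextSketch is a hypothetical, simplified stand-in written for this illustration. It is not how SLF4J implements MDC.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

final class ThreadContextSketch {
    // Simplified stand-in for MDC: one mutable map per thread.
    private static final ThreadLocal<Map<String, String>> CONTEXT =
            ThreadLocal.withInitial(HashMap::new);

    static void put(String key, String value) { CONTEXT.get().put(key, value); }
    static String get(String key) { return CONTEXT.get().get(key); }
    static void clear() { CONTEXT.get().clear(); }

    // Runs two "requests" on the same pooled thread and returns the userId that the
    // second request observes, although it never set one itself.
    static String userIdSeenBySecondRequest(boolean clearAfterFirst) {
        ExecutorService pool = Executors.newSingleThreadExecutor();
        try {
            Runnable first = () -> {
                try {
                    put("userId", "user-456");
                } finally {
                    if (clearAfterFirst) clear();
                }
            };
            Callable<String> second = () -> get("userId");
            pool.submit(first).get();
            return pool.submit(second).get();
        } catch (Exception e) {
            throw new IllegalStateException(e);
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) {
        System.out.println(userIdSeenBySecondRequest(false)); // user-456 (leaked)
        System.out.println(userIdSeenBySecondRequest(true));  // null
    }
}
```

Without the cleanup, the second request would be logged under the first request's user.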

Using MDC in business code

// Business service with enriched contextual logging
package com.example.service;

import org.slf4j.Logger;
import org.slf4j.LoggerFactory;
import org.slf4j.MDC;
import org.springframework.stereotype.Service;

@Service
public class OrderService {

    private static final Logger log = LoggerFactory.getLogger(OrderService.class);

    public Order createOrder(CreateOrderRequest request) {
        // Add business information to MDC context
        MDC.put("orderId", request.getOrderId());
        MDC.put("customerId", request.getCustomerId());
        try {
            log.info("Creating order with {} items", request.getItems().size());

            // Business logic...
            Order order = processOrder(request);

            log.info("Order created successfully, total: {} {}",
                    order.getTotal(), order.getCurrency());
            return order;
        } catch (Exception e) {
            // Exception appears with full MDC context
            log.error("Failed to create order", e);
            throw e;
        } finally {
            // Remove only the business keys added here
            MDC.remove("orderId");
            MDC.remove("customerId");
        }
    }
}

The resulting JSON log entry contains everything needed for debugging.

{
  "@timestamp": "2026-03-27T10:15:32.456Z",
  "level": "INFO",
  "logger": "com.example.service.OrderService",
  "message": "Order created successfully, total: 150.00 EUR",
  "traceId": "a1b2c3d4e5f67890",
  "spanId": "12345678",
  "requestId": "req-abc-123",
  "userId": "user-456",
  "orderId": "ORD-789",
  "customerId": "CUST-321"
}

Asynchronous logging for performance

Async appender configuration

In production, synchronous log writes add to request latency. An asynchronous appender decouples logging from the calling thread.

<!-- logback-spring.xml -->
<!-- High-performance asynchronous appender configuration -->
<appender name="ASYNC_JSON" class="ch.qos.logback.classic.AsyncAppender">
    <!-- Pending log buffer size -->
    <queueSize>1024</queueSize>
    <!-- Never block the calling thread; events are dropped when the queue is full -->
    <neverBlock>true</neverBlock>
    <!-- When fewer than this many slots remain, TRACE, DEBUG and INFO events are dropped -->
    <discardingThreshold>20</discardingThreshold>
    <!-- Include caller information (expensive) -->
    <includeCallerData>false</includeCallerData>
    <!-- Actual appender for writing -->
    <appender-ref ref="JSON_FILE"/>
</appender>

<springProfile name="prod">
    <root level="INFO">
        <appender-ref ref="ASYNC_JSON"/>
    </root>
</springProfile>

Logging system metrics

Monitoring the logging system itself guards against silent log loss.

// Exposing Logback metrics via Micrometer
package com.example.logging.metrics;

import ch.qos.logback.classic.AsyncAppender;
import ch.qos.logback.classic.Logger;
import ch.qos.logback.classic.LoggerContext;
import ch.qos.logback.classic.spi.ILoggingEvent;
import ch.qos.logback.core.Appender;
import io.micrometer.core.instrument.Gauge;
import io.micrometer.core.instrument.MeterRegistry;
import jakarta.annotation.PostConstruct;
import org.slf4j.LoggerFactory;
import org.springframework.stereotype.Component;

import java.util.Iterator;

@Component
public class LoggingMetrics {

    private final MeterRegistry registry;

    public LoggingMetrics(MeterRegistry registry) {
        this.registry = registry;
    }

    @PostConstruct
    void registerMetrics() {
        LoggerContext context = (LoggerContext) LoggerFactory.getILoggerFactory();
        Logger rootLogger = context.getLogger(Logger.ROOT_LOGGER_NAME);

        // Iterate through appenders to find AsyncAppenders
        Iterator<Appender<ILoggingEvent>> it = rootLogger.iteratorForAppenders();
        while (it.hasNext()) {
            Appender<ILoggingEvent> appender = it.next();
            if (appender instanceof AsyncAppender asyncAppender) {
                registerAsyncMetrics(asyncAppender);
            }
        }
    }

    private void registerAsyncMetrics(AsyncAppender appender) {
        String appenderName = appender.getName();

        // Number of events currently waiting in the queue
        Gauge.builder("logback.async.queue.size", appender, AsyncAppender::getNumberOfElementsInQueue)
                .tag("appender", appenderName)
                .description("Current async appender queue size")
                .register(registry);

        // Remaining capacity
        Gauge.builder("logback.async.queue.remaining", appender, AsyncAppender::getRemainingCapacity)
                .tag("appender", appenderName)
                .description("Remaining capacity in async queue")
                .register(registry);

        // Configured maximum queue size. AsyncAppender exposes no counter of
        // discarded events, so drops have to be inferred from remaining capacity.
        Gauge.builder("logback.async.queue.capacity", appender, AsyncAppender::getQueueSize)
                .tag("appender", appenderName)
                .description("Configured async queue capacity")
                .register(registry);
    }
}

A Prometheus alert on logback_async_queue_remaining < 100 (the Prometheus name of logback.async.queue.remaining) warns of the risk of log loss before it happens.
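
A remaining-capacity alert only signals risk. Counting actual drops needs a counter at the point where events are enqueued. The sketch below shows that idea with a bounded queue and a non-blocking offer, which is the general behavior a never-blocking async appender relies on. DroppingQueueSketch is a hypothetical class written for this illustration, not Logback's implementation.

```java
import java.util.concurrent.ArrayBlockingQueue;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.atomic.AtomicLong;

final class DroppingQueueSketch {
    private final BlockingQueue<String> queue;
    private final AtomicLong dropped = new AtomicLong();

    DroppingQueueSketch(int capacity) {
        this.queue = new ArrayBlockingQueue<>(capacity);
    }

    // Non-blocking enqueue: when the queue is full the event is counted as dropped
    // and the caller returns immediately instead of waiting.
    boolean offer(String event) {
        boolean accepted = queue.offer(event);
        if (!accepted) {
            dropped.incrementAndGet();
        }
        return accepted;
    }

    int remainingCapacity() { return queue.remainingCapacity(); }

    long droppedCount() { return dropped.get(); }

    public static void main(String[] args) {
        DroppingQueueSketch q = new DroppingQueueSketch(2);
        q.offer("a");
        q.offer("b");
        q.offer("c"); // queue is full, so this event is dropped
        System.out.println(q.remainingCapacity() + " remaining, " + q.droppedCount() + " dropped");
    }
}
```

Exposing a counter like droppedCount as a metric would turn silent loss into a visible number.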

ELK Stack integration

Filebeat configuration

Filebeat collects the JSON files and ships them to Elasticsearch without an intermediate transformation step.

# filebeat.yml
# Filebeat configuration for Spring Boot JSON logs
filebeat.inputs:
  # Note: recent Filebeat releases deprecate the log input in favor of filestream
  - type: log
    enabled: true
    paths:
      - /var/log/*/application.json
    # Automatic JSON parsing
    json:
      keys_under_root: true
      overwrite_keys: true
      add_error_key: true
      message_key: message

processors:
  # Add Kubernetes metadata if available
  - add_kubernetes_metadata:
      host: ${NODE_NAME}
      matchers:
        - logs_path:
            logs_path: "/var/log/containers/"
  # Parse timestamp
  - timestamp:
      field: "@timestamp"
      layouts:
        - '2006-01-02T15:04:05.000Z'
        - '2006-01-02T15:04:05.000-07:00'
      test:
        - '2026-03-27T10:15:32.456Z'

output.elasticsearch:
  hosts: ["elasticsearch:9200"]
  index: "logs-%{[service]}-%{+yyyy.MM.dd}"
  pipeline: "spring-boot-logs"

setup.template:
  name: "logs"
  pattern: "logs-*"

Elasticsearch pipeline for data enrichment

// PUT _ingest/pipeline/spring-boot-logs
// The triple-quoted script body is Kibana Dev Tools console syntax
{
  "description": "Spring Boot logs enrichment",
  "processors": [
    {
      "geoip": {
        "field": "client.ip",
        "target_field": "client.geo",
        "ignore_missing": true
      }
    },
    {
      "user_agent": {
        "field": "user_agent.original",
        "target_field": "user_agent",
        "ignore_missing": true
      }
    },
    {
      "set": {
        "field": "event.ingested",
        "value": "{{_ingest.timestamp}}"
      }
    },
    {
      "script": {
        "description": "Classify log level severity",
        "source": """
          def level = ctx.level;
          if (level == 'ERROR') ctx.severity = 4;
          else if (level == 'WARN') ctx.severity = 3;
          else if (level == 'INFO') ctx.severity = 2;
          else ctx.severity = 1;
        """
      }
    }
  ]
}

Production best practices

Information to include systematically

Every log entry should carry the minimum information needed for debugging and correlation.

// Helper for consistent structured logs
package com.example.logging;

import org.slf4j.Logger;
import org.slf4j.LoggerFactory;
import org.slf4j.MDC;

import java.util.Map;
import java.util.function.Supplier;

public final class StructuredLogger {

    private final Logger delegate;

    private StructuredLogger(Class<?> clazz) {
        this.delegate = LoggerFactory.getLogger(clazz);
    }

    public static StructuredLogger getLogger(Class<?> clazz) {
        return new StructuredLogger(clazz);
    }

    // Log with temporary business context
    public void info(String message, Map<String, String> context) {
        try {
            context.forEach(MDC::put);
            delegate.info(message);
        } finally {
            context.keySet().forEach(MDC::remove);
        }
    }

    // Log with supplier for lazy evaluation
    public void debug(Supplier<String> messageSupplier, Map<String, String> context) {
        if (delegate.isDebugEnabled()) {
            try {
                context.forEach(MDC::put);
                delegate.debug(messageSupplier.get());
            } finally {
                context.keySet().forEach(MDC::remove);
            }
        }
    }

    // Error log with full context
    public void error(String message, Throwable t, Map<String, String> context) {
        try {
            context.forEach(MDC::put);
            delegate.error(message, t);
        } finally {
            context.keySet().forEach(MDC::remove);
        }
    }
}

// Usage in business code
private static final StructuredLogger log = StructuredLogger.getLogger(PaymentService.class);

public void processPayment(Payment payment) {
    log.info("Processing payment", Map.of(
            "paymentId", payment.getId(),
            "amount", String.valueOf(payment.getAmount()),
            "currency", payment.getCurrency(),
            "method", payment.getMethod().name()
    ));
}

Sensitive information to exclude

Logs must never contain personal or sensitive data.

// Sensitive data masking filter
package com.example.logging.filter;

import ch.qos.logback.classic.spi.ILoggingEvent;
import ch.qos.logback.core.filter.Filter;
import ch.qos.logback.core.spi.FilterReply;

import java.util.regex.Pattern;

public class SensitiveDataFilter extends Filter<ILoggingEvent> {

    // Sensitive data patterns to mask
    private static final Pattern EMAIL_PATTERN =
            Pattern.compile("[a-zA-Z0-9._%+-]+@[a-zA-Z0-9.-]+\\.[a-zA-Z]{2,}");
    private static final Pattern CREDIT_CARD_PATTERN =
            Pattern.compile("\\b\\d{4}[- ]?\\d{4}[- ]?\\d{4}[- ]?\\d{4}\\b");
    private static final Pattern PASSWORD_PATTERN =
            Pattern.compile("(?i)(password|pwd|secret|token)[\"']?\\s*[:=]\\s*[\"']?[^\\s,}\"']+");
    private static final Pattern PHONE_PATTERN =
            Pattern.compile("\\+?\\d{1,3}[- ]?\\d{6,14}");

    @Override
    public FilterReply decide(ILoggingEvent event) {
        // A filter can only accept or reject an event; it cannot rewrite the message,
        // so this method lets every event through unchanged.
        // For real masking, call maskSensitiveData from a custom converter.
        return FilterReply.NEUTRAL;
    }

    // Utility method to mask data
    public static String maskSensitiveData(String input) {
        if (input == null) return null;

        String result = input;
        result = EMAIL_PATTERN.matcher(result).replaceAll("[EMAIL_MASKED]");
        result = CREDIT_CARD_PATTERN.matcher(result).replaceAll("[CARD_MASKED]");
        result = PASSWORD_PATTERN.matcher(result).replaceAll("$1=[REDACTED]");
        result = PHONE_PATTERN.matcher(result).replaceAll("[PHONE_MASKED]");
        return result;
    }
}

Logs that contain personal data fall under GDPR or Korea's Personal Information Protection Act. IP addresses, email addresses, and user identifiers need a retention policy and, where required, a consent process.
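
Regex-based masking is worth exercising against realistic messages before it is relied on. The sketch below restates the four patterns in a standalone class so that it runs without Logback. MaskingSketch is a hypothetical name for this illustration. It shows the intended masking and also a false positive: the phone pattern matches any run of seven or more digits, including an order number.

```java
import java.util.regex.Pattern;

final class MaskingSketch {
    private static final Pattern EMAIL =
            Pattern.compile("[a-zA-Z0-9._%+-]+@[a-zA-Z0-9.-]+\\.[a-zA-Z]{2,}");
    private static final Pattern CARD =
            Pattern.compile("\\b\\d{4}[- ]?\\d{4}[- ]?\\d{4}[- ]?\\d{4}\\b");
    private static final Pattern PASSWORD =
            Pattern.compile("(?i)(password|pwd|secret|token)[\"']?\\s*[:=]\\s*[\"']?[^\\s,}\"']+");
    private static final Pattern PHONE =
            Pattern.compile("\\+?\\d{1,3}[- ]?\\d{6,14}");

    // Applies the patterns in a fixed order: email, card, password, phone.
    static String mask(String input) {
        if (input == null) return null;
        String result = EMAIL.matcher(input).replaceAll("[EMAIL_MASKED]");
        result = CARD.matcher(result).replaceAll("[CARD_MASKED]");
        result = PASSWORD.matcher(result).replaceAll("$1=[REDACTED]");
        result = PHONE.matcher(result).replaceAll("[PHONE_MASKED]");
        return result;
    }

    public static void main(String[] args) {
        // Intended behavior: email, card number and password value are masked.
        System.out.println(mask("user john@example.com paid with 4111 1111 1111 1111, password=hunter2"));
        // False positive: an 8-digit order number is treated as a phone number.
        System.out.println(mask("Order 12345678 created"));
    }
}
```

Tightening the phone pattern, or masking specific structured fields instead of free text, avoids hiding identifiers that are needed for debugging.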

Appropriate log levels

// Appropriate log level guidelines
package com.example.logging;

import org.slf4j.Logger;
import org.slf4j.LoggerFactory;

public class LogLevelGuidelines {

    private static final Logger log = LoggerFactory.getLogger(LogLevelGuidelines.class);

    // The parameters exist only so that each example statement compiles
    void examples(Exception exception, String orderId, String customerId,
                  String cacheKey, int index, int total) {

        // ERROR: Failure requiring intervention
        // - Unrecoverable exceptions
        // - Critical transaction failures
        // - External service unavailability
        log.error("Payment gateway unreachable after 3 retries", exception);

        // WARN: Abnormal but handled situation
        // - Retry in progress
        // - Performance degradation
        // - Resources near limits
        log.warn("Database connection pool at 85% capacity");

        // INFO: Significant business events
        // - Transaction start/end
        // - Important state changes
        // - Key user actions
        log.info("Order {} shipped to customer {}", orderId, customerId);

        // DEBUG: Diagnostic information
        // - Execution details
        // - Important variable values
        // - Branching decisions
        log.debug("Cache miss for key {}, fetching from database", cacheKey);

        // TRACE: Very fine details
        // - Method entry/exit
        // - Complete object contents
        // - Loops and iterations
        log.trace("Processing item {} of {}", index, total);
    }
}

Testing and validating logs

Unit tests for log structure

// Structured log validation tests
// These tests assert on the captured ILoggingEvent, the data handed to the
// JSON encoder, rather than on the encoded JSON string.
package com.example.logging;

import ch.qos.logback.classic.Logger;
import ch.qos.logback.classic.spi.ILoggingEvent;
import ch.qos.logback.core.read.ListAppender;
import org.junit.jupiter.api.AfterEach;
import org.junit.jupiter.api.BeforeEach;
import org.junit.jupiter.api.Test;
import org.slf4j.LoggerFactory;
import org.slf4j.MDC;

import static org.assertj.core.api.Assertions.assertThat;

class StructuredLoggingTest {

    private ListAppender<ILoggingEvent> listAppender;
    private Logger logger;

    @BeforeEach
    void setUp() {
        logger = (Logger) LoggerFactory.getLogger(StructuredLoggingTest.class);
        listAppender = new ListAppender<>();
        listAppender.start();
        logger.addAppender(listAppender);
    }

    @AfterEach
    void tearDown() {
        // Runs even when an assertion fails, so state never leaks between tests
        logger.detachAppender(listAppender);
        MDC.clear();
    }

    @Test
    void shouldIncludeMdcFieldsInLog() {
        // Given
        MDC.put("traceId", "test-trace-123");
        MDC.put("userId", "user-456");

        // When
        logger.info("Test message with MDC context");

        // Then
        ILoggingEvent event = listAppender.list.get(0);
        assertThat(event.getMDCPropertyMap())
                .containsEntry("traceId", "test-trace-123")
                .containsEntry("userId", "user-456");
    }

    @Test
    void shouldLogExceptionWithStackTrace() {
        // Given
        Exception testException = new RuntimeException("Test error");

        // When
        logger.error("Operation failed", testException);

        // Then
        ILoggingEvent event = listAppender.list.get(0);
        assertThat(event.getThrowableProxy()).isNotNull();
        assertThat(event.getThrowableProxy().getMessage()).isEqualTo("Test error");
    }
}

Conclusion

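Structured JSON logs transform the observability of Spring Boot applications.

✅ Queryable: every field can be filtered in Elasticsearch or CloudWatch

✅ Correlatable: MDC attaches trace identifiers to every log entry of a request, and HTTP headers carry them between services

✅ High performance: async appenders decouple logging from request processing

✅ Safe: masking sensitive data supports GDPR compliance

✅ Integrated: works with ELK Stack, Datadog, and Splunk

✅ Alertable: structured fields allow precise alert rules

✅ Maintainable: the JSON format removes brittle parsing regexes

Together with metrics (Micrometer) and distributed tracing (OpenTelemetry), this approach forms the foundation of modern observability.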

연습을 시작하세요!

면접 시뮬레이터와 기술 테스트로 지식을 테스트하세요.

태그

공유

관련 기사

Spring Boot Actuator: Micrometer와 Prometheus로 구현하는 운영 모니터링

운영 모니터링을 위한 Spring Boot Actuator 완전 가이드입니다. Micrometer 설정, Prometheus 지표, 커스텀 엔드포인트, 알림 구성을 다룹니다.

Spring Kafka: 회복탄력성을 갖춘 컨슈머로 구축하는 이벤트 기반 아키텍처

이벤트 기반 아키텍처를 위한 완전한 Spring Kafka 가이드. 설정, 회복탄력성을 갖춘 컨슈머, 재시도 정책, Dead Letter Queue, 분산 애플리케이션을 위한 운영 패턴.

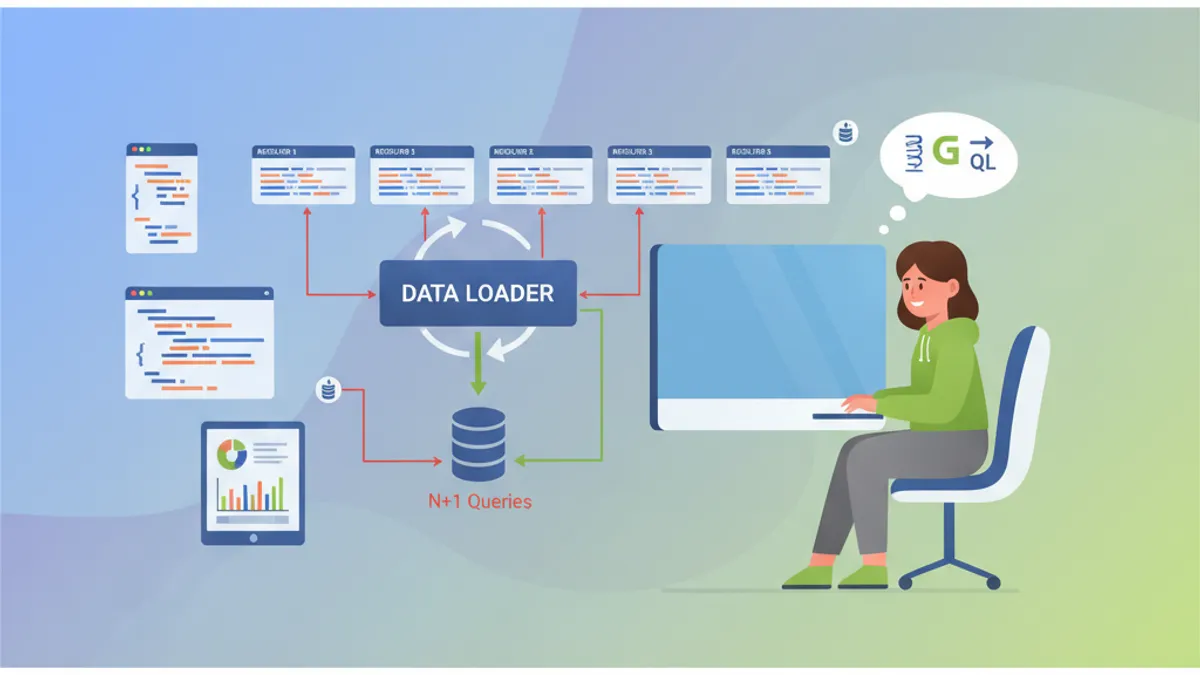

Spring GraphQL 면접: Resolver, DataLoader 및 N+1 문제 해결책

이 완전한 가이드로 Spring GraphQL 면접을 준비합니다. Resolver, DataLoader, N+1 문제 처리, mutation 및 기술 질문을 위한 모범 사례를 다룹니다.