Spring Boot Logging in 2026: Structured Logs for Production with Logback and JSON

Complete guide to Spring Boot structured logging. Logback JSON configuration, MDC for tracing, production best practices and ELK Stack integration.

Traditional text-based logs quickly become unmanageable in production. With hundreds of instances generating thousands of lines per second, searching for a specific error becomes a nightmare. Structured JSON logs transform this situation by making every event queryable and automatically analyzable.

Spring Boot 3.4+ natively supports structured JSON logging without external dependencies. For earlier versions, Logback Logstash Encoder remains the reference solution.

Why Adopt Structured Logging

Limitations of Traditional Text Logs

A typical text log looks like this:

2026-03-27 10:15:32.456 INFO [order-service,abc123] c.e.s.OrderService - Order created for user john@example.com, amount: 150.00€, items: 3This format poses several problems in production. Extracting specific information requires complex and fragile regex patterns. Cross-service correlation requires strict conventions that each team interprets differently. Analysis tools like Elasticsearch struggle to efficiently index these unstructured strings.

Benefits of JSON Format

The same event in JSON becomes immediately exploitable:

{

"@timestamp": "2026-03-27T10:15:32.456Z",

"level": "INFO",

"logger": "com.example.service.OrderService",

"message": "Order created",

"service": "order-service",

"traceId": "abc123",

"userId": "john@example.com",

"orderId": "ORD-789456",

"amount": 150.00,

"currency": "EUR",

"itemCount": 3

}Every field becomes filterable and aggregable. An Elasticsearch query can instantly find all orders over 100€ from the last fifteen minutes. Kibana dashboards visualize trends without manual parsing.

Native Spring Boot 3.4+ Configuration

Enabling Structured JSON Logs

Spring Boot 3.4 introduces native structured logging support via the logging.structured property. This approach requires no additional dependencies.

# application.yml

# Native structured logging configuration for Spring Boot 3.4+

logging:

structured:

# Output format: ecs (Elastic), logstash, gelf

format:

console: ecs

file: ecs

file:

name: /var/log/app/application.log

level:

root: INFO

com.example: DEBUGThe ECS (Elastic Common Schema) format guarantees direct compatibility with Elasticsearch and Kibana without additional configuration.

Customizing JSON Fields

To add business fields to every log, Spring Boot allows configuring additional attributes.

# application.yml

# Custom fields in structured logs

logging:

structured:

format:

console: ecs

ecs:

# Service information added to every log

service:

name: ${spring.application.name}

version: ${app.version:1.0.0}

environment: ${spring.profiles.active:default}

node-name: ${HOSTNAME:unknown}// Programmatic configuration for additional fields

package com.example.logging.config;

import org.springframework.boot.logging.structured.StructuredLogFormatterCustomizer;

import org.springframework.context.annotation.Bean;

import org.springframework.context.annotation.Configuration;

@Configuration

public class LoggingConfig {

@Bean

StructuredLogFormatterCustomizer<EcsStructuredLogFormatter> ecsCustomizer() {

return formatter -> formatter

// Adds static fields to all logs

.addStaticField("team", "backend")

.addStaticField("region", System.getenv("AWS_REGION"))

// Customizes exception formatting

.setIncludeStacktrace(true)

.setStacktraceMaxLength(5000);

}

}These fields appear in every log line, facilitating filtering by team or region in dashboards.

Classic Logback Configuration with JSON Encoder

Logstash Encoder Dependency

For Spring Boot versions before 3.4 or advanced customization needs, Logstash Logback Encoder remains the reference solution.

<!-- pom.xml -->

<!-- Dependency for JSON logging with Logback -->

<dependency>

<groupId>net.logstash.logback</groupId>

<artifactId>logstash-logback-encoder</artifactId>

<version>7.4</version>

</dependency>Complete Logback Configuration

The logback-spring.xml file offers total control over output format.

<!-- src/main/resources/logback-spring.xml -->

<!-- Logback configuration for structured JSON logs -->

<?xml version="1.0" encoding="UTF-8"?>

<configuration>

<!-- Spring Boot properties -->

<springProperty scope="context" name="appName" source="spring.application.name" defaultValue="app"/>

<springProperty scope="context" name="appVersion" source="app.version" defaultValue="1.0.0"/>

<!-- JSON console appender for production -->

<appender name="JSON_CONSOLE" class="ch.qos.logback.core.ConsoleAppender">

<encoder class="net.logstash.logback.encoder.LogstashEncoder">

<!-- Custom fields added to every log -->

<customFields>{"service":"${appName}","version":"${appVersion}"}</customFields>

<!-- Includes MDC (tracing context) -->

<includeMdcKeyName>traceId</includeMdcKeyName>

<includeMdcKeyName>spanId</includeMdcKeyName>

<includeMdcKeyName>userId</includeMdcKeyName>

<includeMdcKeyName>requestId</includeMdcKeyName>

<!-- ISO8601 timestamp format -->

<timestampPattern>yyyy-MM-dd'T'HH:mm:ss.SSSZ</timestampPattern>

<!-- Complete stack traces -->

<throwableConverter class="net.logstash.logback.stacktrace.ShortenedThrowableConverter">

<maxDepthPerThrowable>30</maxDepthPerThrowable>

<maxLength>4096</maxLength>

<shortenedClassNameLength>36</shortenedClassNameLength>

<rootCauseFirst>true</rootCauseFirst>

</throwableConverter>

</encoder>

</appender>

<!-- Rolling JSON file appender -->

<appender name="JSON_FILE" class="ch.qos.logback.core.rolling.RollingFileAppender">

<file>/var/log/${appName}/application.json</file>

<rollingPolicy class="ch.qos.logback.core.rolling.TimeBasedRollingPolicy">

<fileNamePattern>/var/log/${appName}/application.%d{yyyy-MM-dd}.%i.json.gz</fileNamePattern>

<maxHistory>30</maxHistory>

<maxFileSize>100MB</maxFileSize>

<totalSizeCap>3GB</totalSizeCap>

</rollingPolicy>

<encoder class="net.logstash.logback.encoder.LogstashEncoder">

<customFields>{"service":"${appName}","version":"${appVersion}"}</customFields>

</encoder>

</appender>

<!-- Text appender for development -->

<appender name="TEXT_CONSOLE" class="ch.qos.logback.core.ConsoleAppender">

<encoder>

<pattern>%d{HH:mm:ss.SSS} %highlight(%-5level) [%thread] %cyan(%logger{36}) - %msg%n</pattern>

</encoder>

</appender>

<!-- Activation by Spring profile -->

<springProfile name="prod,staging">

<root level="INFO">

<appender-ref ref="JSON_CONSOLE"/>

<appender-ref ref="JSON_FILE"/>

</root>

</springProfile>

<springProfile name="dev,local">

<root level="DEBUG">

<appender-ref ref="TEXT_CONSOLE"/>

</root>

</springProfile>

</configuration>This configuration activates JSON logs only in production while preserving readable logs in development.

Using <springProfile> allows automatic switching between text and JSON formats based on the environment, without modifying the configuration.

MDC for Distributed Tracing

Trace Context Propagation

MDC (Mapped Diagnostic Context) enriches every log with context information like request or trace identifiers.

// Filter for automatic trace context injection

package com.example.logging.filter;

import jakarta.servlet.FilterChain;

import jakarta.servlet.ServletException;

import jakarta.servlet.http.HttpServletRequest;

import jakarta.servlet.http.HttpServletResponse;

import org.slf4j.MDC;

import org.springframework.core.Ordered;

import org.springframework.core.annotation.Order;

import org.springframework.stereotype.Component;

import org.springframework.web.filter.OncePerRequestFilter;

import java.io.IOException;

import java.util.UUID;

@Component

@Order(Ordered.HIGHEST_PRECEDENCE)

public class TracingFilter extends OncePerRequestFilter {

// Standard MDC keys for tracing

private static final String TRACE_ID_KEY = "traceId";

private static final String SPAN_ID_KEY = "spanId";

private static final String REQUEST_ID_KEY = "requestId";

private static final String USER_ID_KEY = "userId";

@Override

protected void doFilterInternal(

HttpServletRequest request,

HttpServletResponse response,

FilterChain filterChain) throws ServletException, IOException {

try {

// Retrieve or generate trace identifiers

String traceId = extractOrGenerate(request, "X-Trace-Id", TRACE_ID_KEY);

String spanId = generateSpanId();

String requestId = extractOrGenerate(request, "X-Request-Id", REQUEST_ID_KEY);

String userId = request.getHeader("X-User-Id");

// Inject into MDC to appear in all logs

MDC.put(TRACE_ID_KEY, traceId);

MDC.put(SPAN_ID_KEY, spanId);

MDC.put(REQUEST_ID_KEY, requestId);

if (userId != null) {

MDC.put(USER_ID_KEY, userId);

}

// Propagate to responses for inter-service chaining

response.setHeader("X-Trace-Id", traceId);

response.setHeader("X-Request-Id", requestId);

filterChain.doFilter(request, response);

} finally {

// Clean MDC after each request

MDC.clear();

}

}

private String extractOrGenerate(HttpServletRequest request, String header, String key) {

String value = request.getHeader(header);

return value != null ? value : UUID.randomUUID().toString().replace("-", "").substring(0, 16);

}

private String generateSpanId() {

return UUID.randomUUID().toString().replace("-", "").substring(0, 8);

}

}Every log emitted during request processing will automatically contain these identifiers.

Using MDC in Business Code

// Business service with enriched contextual logging

package com.example.service;

import org.slf4j.Logger;

import org.slf4j.LoggerFactory;

import org.slf4j.MDC;

import org.springframework.stereotype.Service;

@Service

public class OrderService {

private static final Logger log = LoggerFactory.getLogger(OrderService.class);

public Order createOrder(CreateOrderRequest request) {

// Add business information to MDC context

MDC.put("orderId", request.getOrderId());

MDC.put("customerId", request.getCustomerId());

try {

log.info("Creating order with {} items", request.getItems().size());

// Business logic...

Order order = processOrder(request);

log.info("Order created successfully, total: {} {}",

order.getTotal(), order.getCurrency());

return order;

} catch (Exception e) {

// Exception appears with full MDC context

log.error("Failed to create order", e);

throw e;

} finally {

// Clean business keys added

MDC.remove("orderId");

MDC.remove("customerId");

}

}

}The resulting JSON log contains all necessary information for debugging:

{

"@timestamp": "2026-03-27T10:15:32.456Z",

"level": "INFO",

"logger": "com.example.service.OrderService",

"message": "Order created successfully, total: 150.00 EUR",

"traceId": "a1b2c3d4e5f67890",

"spanId": "12345678",

"requestId": "req-abc-123",

"userId": "user-456",

"orderId": "ORD-789",

"customerId": "CUST-321"

}Ready to ace your Spring Boot interviews?

Practice with our interactive simulators, flashcards, and technical tests.

Asynchronous Logging for Performance

Thread Pool Configuration

In production, synchronous log writes impact request latency. The asynchronous appender decouples logging from the main thread.

<!-- logback-spring.xml -->

<!-- High-performance asynchronous appender configuration -->

<appender name="ASYNC_JSON" class="ch.qos.logback.classic.AsyncAppender">

<!-- Pending log buffer size -->

<queueSize>1024</queueSize>

<!-- Never block the calling thread -->

<neverBlock>true</neverBlock>

<!-- Threshold before dropping DEBUG/TRACE logs -->

<discardingThreshold>20</discardingThreshold>

<!-- Include caller information (expensive) -->

<includeCallerData>false</includeCallerData>

<!-- Actual appender for writing -->

<appender-ref ref="JSON_FILE"/>

</appender>

<springProfile name="prod">

<root level="INFO">

<appender-ref ref="ASYNC_JSON"/>

</root>

</springProfile>Logging System Metrics

Monitoring the logging system itself prevents silent log loss.

// Exposing Logback metrics via Micrometer

package com.example.logging.metrics;

import ch.qos.logback.classic.Logger;

import ch.qos.logback.classic.LoggerContext;

import ch.qos.logback.classic.spi.ILoggingEvent;

import ch.qos.logback.core.Appender;

import ch.qos.logback.classic.AsyncAppender;

import io.micrometer.core.instrument.Gauge;

import io.micrometer.core.instrument.MeterRegistry;

import org.slf4j.LoggerFactory;

import org.springframework.stereotype.Component;

import jakarta.annotation.PostConstruct;

import java.util.Iterator;

@Component

public class LoggingMetrics {

private final MeterRegistry registry;

public LoggingMetrics(MeterRegistry registry) {

this.registry = registry;

}

@PostConstruct

void registerMetrics() {

LoggerContext context = (LoggerContext) LoggerFactory.getILoggerFactory();

Logger rootLogger = context.getLogger(Logger.ROOT_LOGGER_NAME);

// Iterate through appenders to find AsyncAppenders

Iterator<Appender<ILoggingEvent>> it = rootLogger.iteratorForAppenders();

while (it.hasNext()) {

Appender<ILoggingEvent> appender = it.next();

if (appender instanceof AsyncAppender asyncAppender) {

registerAsyncMetrics(asyncAppender);

}

}

}

private void registerAsyncMetrics(AsyncAppender appender) {

String appenderName = appender.getName();

// Current queue size

Gauge.builder("logback.async.queue.size", appender, AsyncAppender::getQueueSize)

.tag("appender", appenderName)

.description("Current async appender queue size")

.register(registry);

// Remaining capacity

Gauge.builder("logback.async.queue.remaining", appender, AsyncAppender::getRemainingCapacity)

.tag("appender", appenderName)

.description("Remaining capacity in async queue")

.register(registry);

// Number of dropped logs

Gauge.builder("logback.async.discarded", appender, AsyncAppender::getNumberOfElementsInQueue)

.tag("appender", appenderName)

.description("Number of discarded log events")

.register(registry);

}

}A Prometheus alert on logback.async.queue.remaining < 100 warns of log loss risks.

ELK Stack Integration

Filebeat Configuration

Filebeat collects JSON files and sends them to Elasticsearch without transformation.

# filebeat.yml

# Filebeat configuration for Spring Boot JSON logs

filebeat.inputs:

- type: log

enabled: true

paths:

- /var/log/*/application.json

# Automatic JSON parsing

json:

keys_under_root: true

overwrite_keys: true

add_error_key: true

message_key: message

processors:

# Add Kubernetes metadata if available

- add_kubernetes_metadata:

host: ${NODE_NAME}

matchers:

- logs_path:

logs_path: "/var/log/containers/"

# Parse timestamp

- timestamp:

field: "@timestamp"

layouts:

- '2006-01-02T15:04:05.000Z'

- '2006-01-02T15:04:05.000-07:00'

test:

- '2026-03-27T10:15:32.456Z'

output.elasticsearch:

hosts: ["elasticsearch:9200"]

index: "logs-%{[service]}-%{+yyyy.MM.dd}"

pipeline: "spring-boot-logs"

setup.template:

name: "logs"

pattern: "logs-*"Elasticsearch Pipeline for Enrichment

// PUT _ingest/pipeline/spring-boot-logs

{

"description": "Spring Boot logs enrichment",

"processors": [

{

"geoip": {

"field": "client.ip",

"target_field": "client.geo",

"ignore_missing": true

}

},

{

"user_agent": {

"field": "user_agent.original",

"target_field": "user_agent",

"ignore_missing": true

}

},

{

"set": {

"field": "event.ingested",

"value": "{{_ingest.timestamp}}"

}

},

{

"script": {

"description": "Classify log level severity",

"source": """

def level = ctx.level;

if (level == 'ERROR') ctx.severity = 4;

else if (level == 'WARN') ctx.severity = 3;

else if (level == 'INFO') ctx.severity = 2;

else ctx.severity = 1;

"""

}

}

]

}Production Best Practices

Information to Include Systematically

Every log should contain minimum information for debugging and correlation.

// Helper for consistent structured logs

package com.example.logging;

import org.slf4j.Logger;

import org.slf4j.LoggerFactory;

import org.slf4j.MDC;

import java.util.Map;

import java.util.function.Supplier;

public final class StructuredLogger {

private final Logger delegate;

private StructuredLogger(Class<?> clazz) {

this.delegate = LoggerFactory.getLogger(clazz);

}

public static StructuredLogger getLogger(Class<?> clazz) {

return new StructuredLogger(clazz);

}

// Log with temporary business context

public void info(String message, Map<String, String> context) {

try {

context.forEach(MDC::put);

delegate.info(message);

} finally {

context.keySet().forEach(MDC::remove);

}

}

// Log with supplier for lazy evaluation

public void debug(Supplier<String> messageSupplier, Map<String, String> context) {

if (delegate.isDebugEnabled()) {

try {

context.forEach(MDC::put);

delegate.debug(messageSupplier.get());

} finally {

context.keySet().forEach(MDC::remove);

}

}

}

// Error log with full context

public void error(String message, Throwable t, Map<String, String> context) {

try {

context.forEach(MDC::put);

delegate.error(message, t);

} finally {

context.keySet().forEach(MDC::remove);

}

}

}// Usage in business code

private static final StructuredLogger log = StructuredLogger.getLogger(PaymentService.class);

public void processPayment(Payment payment) {

log.info("Processing payment", Map.of(

"paymentId", payment.getId(),

"amount", String.valueOf(payment.getAmount()),

"currency", payment.getCurrency(),

"method", payment.getMethod().name()

));

}Sensitive Information to Exclude

Logs should never contain personal or sensitive data.

// Sensitive data masking filter

package com.example.logging.filter;

import ch.qos.logback.classic.spi.ILoggingEvent;

import ch.qos.logback.core.filter.Filter;

import ch.qos.logback.core.spi.FilterReply;

import java.util.regex.Pattern;

public class SensitiveDataFilter extends Filter<ILoggingEvent> {

// Sensitive data patterns to mask

private static final Pattern EMAIL_PATTERN =

Pattern.compile("[a-zA-Z0-9._%+-]+@[a-zA-Z0-9.-]+\\.[a-zA-Z]{2,}");

private static final Pattern CREDIT_CARD_PATTERN =

Pattern.compile("\\b\\d{4}[- ]?\\d{4}[- ]?\\d{4}[- ]?\\d{4}\\b");

private static final Pattern PASSWORD_PATTERN =

Pattern.compile("(?i)(password|pwd|secret|token)[\"']?\\s*[:=]\\s*[\"']?[^\\s,}\"']+");

private static final Pattern PHONE_PATTERN =

Pattern.compile("\\+?\\d{1,3}[- ]?\\d{6,14}");

@Override

public FilterReply decide(ILoggingEvent event) {

// Accept all logs but modify the message

// Note: for real masking, use a custom converter

return FilterReply.NEUTRAL;

}

// Utility method to mask data

public static String maskSensitiveData(String input) {

if (input == null) return null;

String result = input;

result = EMAIL_PATTERN.matcher(result).replaceAll("[EMAIL_MASKED]");

result = CREDIT_CARD_PATTERN.matcher(result).replaceAll("[CARD_MASKED]");

result = PASSWORD_PATTERN.matcher(result).replaceAll("$1=[REDACTED]");

result = PHONE_PATTERN.matcher(result).replaceAll("[PHONE_MASKED]");

return result;

}

}Logs containing personal data are subject to GDPR. IP addresses, emails, and user identifiers require a retention policy and potentially consent.

Appropriate Log Levels

// Appropriate log level guidelines

package com.example.logging;

public class LogLevelGuidelines {

// ERROR: Failure requiring intervention

// - Unrecoverable exceptions

// - Critical transaction failures

// - External service unavailability

log.error("Payment gateway unreachable after 3 retries", exception);

// WARN: Abnormal but handled situation

// - Retry in progress

// - Performance degradation

// - Resources near limits

log.warn("Database connection pool at 85% capacity");

// INFO: Significant business events

// - Transaction start/end

// - Important state changes

// - Key user actions

log.info("Order {} shipped to customer {}", orderId, customerId);

// DEBUG: Diagnostic information

// - Execution details

// - Important variable values

// - Branching decisions

log.debug("Cache miss for key {}, fetching from database", cacheKey);

// TRACE: Very fine details

// - Method entry/exit

// - Complete object contents

// - Loops and iterations

log.trace("Processing item {} of {}", index, total);

}Testing and Validating Logs

Unit Testing JSON Structure

// Structured log validation tests

package com.example.logging;

import ch.qos.logback.classic.Logger;

import ch.qos.logback.classic.spi.ILoggingEvent;

import ch.qos.logback.core.read.ListAppender;

import com.fasterxml.jackson.databind.JsonNode;

import com.fasterxml.jackson.databind.ObjectMapper;

import org.junit.jupiter.api.BeforeEach;

import org.junit.jupiter.api.Test;

import org.slf4j.LoggerFactory;

import org.slf4j.MDC;

import static org.assertj.core.api.Assertions.assertThat;

class StructuredLoggingTest {

private ListAppender<ILoggingEvent> listAppender;

private Logger logger;

private ObjectMapper objectMapper;

@BeforeEach

void setUp() {

logger = (Logger) LoggerFactory.getLogger(StructuredLoggingTest.class);

listAppender = new ListAppender<>();

listAppender.start();

logger.addAppender(listAppender);

objectMapper = new ObjectMapper();

}

@Test

void shouldIncludeMdcFieldsInLog() {

// Given

MDC.put("traceId", "test-trace-123");

MDC.put("userId", "user-456");

// When

logger.info("Test message with MDC context");

// Then

ILoggingEvent event = listAppender.list.get(0);

assertThat(event.getMDCPropertyMap())

.containsEntry("traceId", "test-trace-123")

.containsEntry("userId", "user-456");

MDC.clear();

}

@Test

void shouldLogExceptionWithStackTrace() {

// Given

Exception testException = new RuntimeException("Test error");

// When

logger.error("Operation failed", testException);

// Then

ILoggingEvent event = listAppender.list.get(0);

assertThat(event.getThrowableProxy()).isNotNull();

assertThat(event.getThrowableProxy().getMessage()).isEqualTo("Test error");

}

}Conclusion

Structured JSON logs transform Spring Boot application observability:

✅ Queryable: every field becomes filterable in Elasticsearch or CloudWatch

✅ Correlatable: MDC propagates trace identifiers between services

✅ Performant: asynchronous appender decouples logging from processing

✅ Secure: sensitive data masking ensures GDPR compliance

✅ Integrated: native compatibility with ELK Stack, Datadog, Splunk

✅ Alertable: structured fields enable precise alert rules

✅ Maintainable: JSON format eliminates fragile parsing regex

This approach forms the foundation of modern observability, alongside metrics (Micrometer) and distributed tracing (OpenTelemetry).

Start practicing!

Test your knowledge with our interview simulators and technical tests.

Tags

Share

Related articles

Spring Boot Actuator: Production Monitoring with Micrometer and Prometheus

Complete Spring Boot Actuator guide for production monitoring. Micrometer configuration, Prometheus metrics, custom endpoints and alerting setup.

Spring Kafka: Event-Driven Architecture with Resilient Consumers

Complete Spring Kafka guide for event-driven architectures. Configuration, resilient consumers, retry policies, dead letter queues and production patterns for distributed applications.

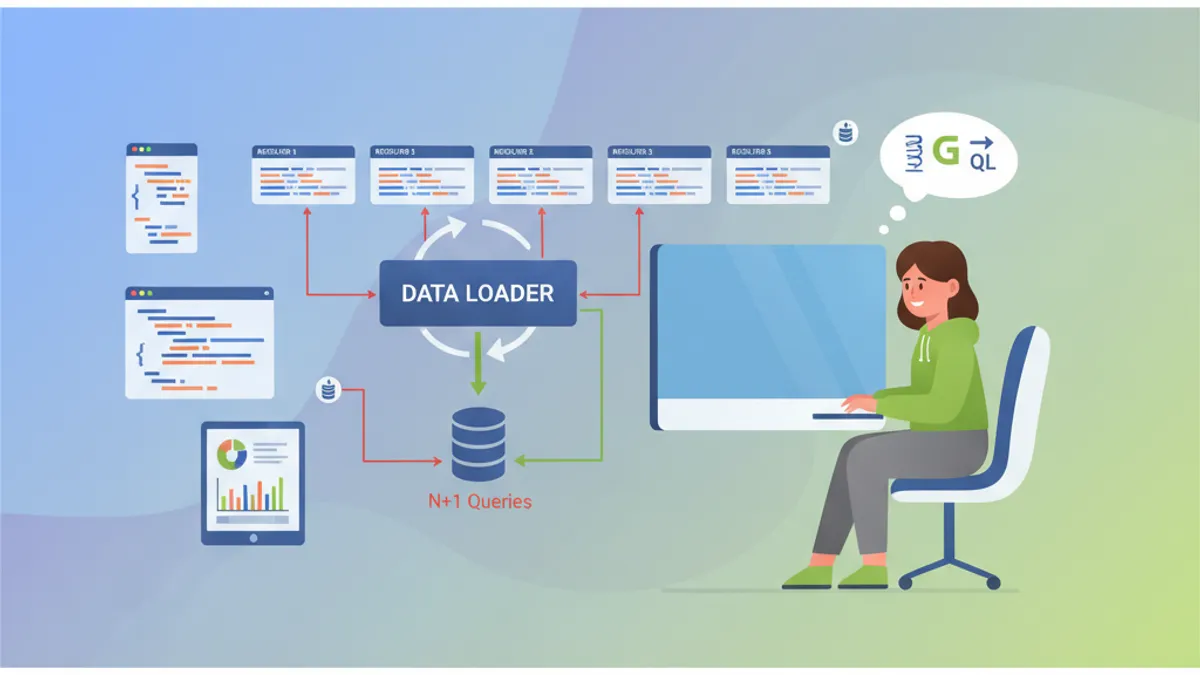

Spring GraphQL Interview: Resolvers, DataLoaders and N+1 Problem Solutions

Prepare for Spring GraphQL interviews with this complete guide. Resolvers, DataLoaders, N+1 problem handling, mutations, and best practices for technical questions.