ETL vs ELT in 2026: Data Pipeline Architecture Explained

ETL vs ELT comparison for modern data pipelines. Understand the architectural differences, performance trade-offs, and when to use each approach with Snowflake, BigQuery, and dbt in 2026.
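The core architectural difference is *where* the transformation runs. Below is a minimal sketch that uses Python's built-in `sqlite3` as a stand-in for a cloud warehouse such as Snowflake or BigQuery. The table names and the sample `RAW_ORDERS` records are hypothetical. ETL cleans the data in pipeline code and loads only the curated result. ELT loads the raw rows first and then transforms them with SQL inside the warehouse, which is the step a tool like dbt would manage as a model.

```python
import sqlite3

# Hypothetical raw records as they might arrive from a source system.
RAW_ORDERS = [
    {"order_id": 1, "amount_cents": 1250, "status": "complete"},
    {"order_id": 2, "amount_cents": 990, "status": "cancelled"},
    {"order_id": 3, "amount_cents": 4000, "status": "complete"},
]


def run_etl(conn: sqlite3.Connection) -> None:
    """ETL: transform in the pipeline (Python), then load only the curated result."""
    curated = [
        (r["order_id"], r["amount_cents"] / 100)
        for r in RAW_ORDERS
        if r["status"] == "complete"
    ]
    conn.execute("CREATE TABLE etl_orders (order_id INTEGER, amount REAL)")
    conn.executemany("INSERT INTO etl_orders VALUES (?, ?)", curated)


def run_elt(conn: sqlite3.Connection) -> None:
    """ELT: load raw data as-is, then transform with SQL inside the warehouse."""
    conn.execute(
        "CREATE TABLE raw_orders (order_id INTEGER, amount_cents INTEGER, status TEXT)"
    )
    conn.executemany(
        "INSERT INTO raw_orders VALUES (:order_id, :amount_cents, :status)",
        RAW_ORDERS,
    )
    # In-warehouse transformation (the kind of SQL a dbt model would hold).
    conn.execute(
        """
        CREATE VIEW elt_orders AS
        SELECT order_id, amount_cents / 100.0 AS amount
        FROM raw_orders
        WHERE status = 'complete'
        """
    )


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    run_etl(conn)
    run_elt(conn)
    etl = conn.execute("SELECT * FROM etl_orders ORDER BY order_id").fetchall()
    elt = conn.execute("SELECT * FROM elt_orders ORDER BY order_id").fetchall()
    print(etl == elt)  # True: same result, different place of transformation
    # Only ELT keeps the raw rows, so the cancelled order can be re-modeled later.
    print(conn.execute("SELECT COUNT(*) FROM raw_orders").fetchone()[0])  # 3
```

Both paths produce the same curated table. However, only ELT keeps the raw history in the warehouse, so you can change the business logic later without re-extracting from the source.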

A comprehensive Data Engineering curriculum covering the entire data production chain: from environment setup with Docker and GCP, through Data Warehouse creation with BigQuery and PostgreSQL, to pipeline transformation and orchestration with dbt and Airflow. Learn to process streaming data with PySpark, Pub/Sub, and Apache Beam, deploy to production with Kubernetes and Terraform, and master CI/CD best practices, monitoring, and modern data architectures.

Development environments: Linux, Git, GitHub, VS Code, advanced Python

CI/CD and code quality: Ruff, Pylint, Poetry, GitHub Actions

Containerization with Docker and Docker Compose

APIs with FastAPI: design, deployment, documentation

Data Lake: ingestion, storage, raw data organization

Data Warehouse with BigQuery: schemas, partitioning, optimization

PostgreSQL: setup, administration, comparison with managed solutions

Data ingestion with Fivetran and Airbyte

Transformation with dbt: models, tests, documentation, modularity

Orchestration with Apache Airflow: DAGs, scheduling, monitoring

Big Data with PySpark: large-scale transformations

Data streaming: Google Pub/Sub, Apache Beam, Dataflow

Kubernetes: container deployment, scaling, production clusters

Infrastructure as Code with Terraform

Advanced databases: graph databases, document databases, wide-column databases

Logging, monitoring and pipeline observability
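The curriculum pairs managed ingestion (Fivetran, Airbyte) with dbt transformations. A shared idea behind cursor-based incremental syncs and dbt incremental models (where the filter is usually wrapped in dbt's `is_incremental()` macro) is to process only rows that changed since the last run. The sketch below is an illustration of that high-watermark pattern, not how either tool is implemented. It uses `sqlite3`, and the table `target` and the function `incremental_load` are hypothetical. It assumes each source row carries a monotonically increasing ISO-8601 `updated_at` value.

```python
import sqlite3


def incremental_load(conn: sqlite3.Connection, source_rows: list[tuple[int, str, str]]) -> int:
    """Upsert only source rows newer than the current high-watermark.

    Assumes each row is (id, value, updated_at) with ISO-8601 timestamps,
    so string comparison orders them chronologically.
    """
    conn.execute(
        "CREATE TABLE IF NOT EXISTS target "
        "(id INTEGER PRIMARY KEY, value TEXT, updated_at TEXT)"
    )
    # High-watermark: the latest updated_at already present in the target.
    (watermark,) = conn.execute("SELECT MAX(updated_at) FROM target").fetchone()
    new_rows = [r for r in source_rows if watermark is None or r[2] > watermark]
    # Upsert so that newer versions of existing ids replace the old ones.
    conn.executemany(
        "INSERT OR REPLACE INTO target (id, value, updated_at) VALUES (?, ?, ?)",
        new_rows,
    )
    return len(new_rows)


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    batch_1 = [(1, "a", "2026-01-01T00:00:00"), (2, "b", "2026-01-02T00:00:00")]
    print(incremental_load(conn, batch_1))  # 2 (first run loads everything)
    # Second sync: row 2 is unchanged, row 1 was updated, row 3 is new.
    batch_2 = [
        (2, "b", "2026-01-02T00:00:00"),
        (1, "a2", "2026-01-03T00:00:00"),
        (3, "c", "2026-01-04T00:00:00"),
    ]
    print(incremental_load(conn, batch_2))  # 2 (row 2 is skipped)
```

The pure high-watermark filter has two limitations. It silently skips late-arriving rows whose `updated_at` is at or below the watermark, and it never sees hard deletes. Log-based CDC avoids both problems, at the cost of more infrastructure.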

The most important concepts to understand data engineering and ace your interviews

Linux & Shell: essential commands, bash scripting, permissions, cron jobs

Git & GitHub: branching, merging, rebasing, pull requests, CI/CD workflows

Advanced Python: OOP, decorators, generators, context managers, typing, async/await

CI/CD: linting (Ruff, Pylint), packaging (Poetry), tests, GitHub Actions, pipelines

Docker: Dockerfile, images, containers, volumes, networks, multi-stage builds

Docker Compose: multi-container services, dependencies, healthchecks, local orchestration

FastAPI: routes, Pydantic models, dependencies, middleware, deployment

Advanced SQL: window functions, CTEs, analytical queries, optimization, indexing

BigQuery: serverless architecture, partitioning, clustering, costs, UDFs, federated queries

PostgreSQL: configuration, replication, indexing (B-tree, GIN, GiST), VACUUM, EXPLAIN ANALYZE

Data Modeling: star schema, fact/dimension tables, normalization, SCD, Data Vault

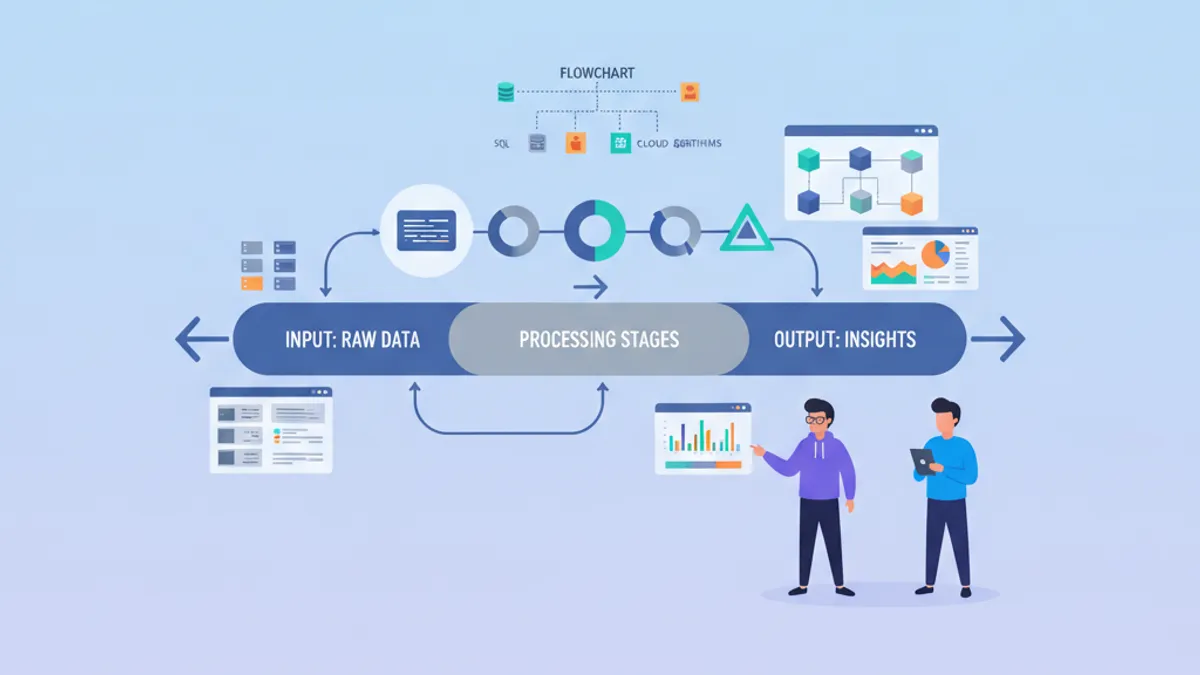

ELT vs ETL vs ETLT: patterns, trade-offs, architecture choices

Fivetran & Airbyte: connectors, sync modes, CDC, schema evolution

dbt: models, sources, refs, tests, snapshots, incremental models, Jinja macros

Apache Airflow: DAGs, operators, sensors, XCom, connections, pools, task dependencies

PySpark: RDD vs DataFrame, transformations, actions, partitioning, broadcast variables

Streaming: Pub/Sub (topics, subscriptions), Apache Beam (PCollections, transforms, windowing), Dataflow

Kubernetes: pods, deployments, services, ingress, ConfigMaps, Secrets, Helm, scaling

Terraform: providers, resources, state, modules, plan/apply, infrastructure as code

IAM & security: principle of least privilege, service accounts, GCP roles

NoSQL databases: graph databases (Neo4j), document databases (MongoDB, Firestore), wide-column databases (Cassandra, Bigtable)

Data Architecture: Data Lake vs Data Warehouse vs Data Lakehouse, Data Mesh, Data Contracts

Monitoring & observability: logging, metrics, alerting, SLA/SLO/SLI, data quality checks
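ETLT, listed above next to ETL and ELT, splits the transformation in two. A light, non-business "T" runs in flight (for example PII masking or type normalization), so sensitive values never land in the warehouse. The heavy business logic still runs as SQL after loading. Below is a minimal sketch with `sqlite3` standing in for the warehouse. The records, table names, and the choice of SHA-256 for masking are illustrative assumptions.

```python
import hashlib
import sqlite3

# Hypothetical source records containing PII (email addresses).
SOURCE = [
    {"email": "Ana@Example.com", "country": "fr", "amount": 30.0},
    {"email": "bo@example.com", "country": "FR", "amount": 20.0},
    {"email": "ana@example.com", "country": "de", "amount": 50.0},
]


def light_transform(record: dict) -> tuple[str, str, float]:
    """First T: minimal in-flight cleanup before the data reaches the warehouse.

    Normalizes and hashes the email (so raw PII is never loaded) and
    upper-cases the country code. No business logic lives here.
    """
    email = record["email"].strip().lower()
    email_hash = hashlib.sha256(email.encode("utf-8")).hexdigest()
    return email_hash, record["country"].upper(), record["amount"]


def run_etlt(conn: sqlite3.Connection) -> None:
    # Extract + first T + Load: land lightly cleaned rows in a raw table.
    conn.execute(
        "CREATE TABLE raw_payments (email_hash TEXT, country TEXT, amount REAL)"
    )
    conn.executemany(
        "INSERT INTO raw_payments VALUES (?, ?, ?)",
        [light_transform(r) for r in SOURCE],
    )
    # Second T: business transformation in SQL inside the warehouse.
    conn.execute(
        """
        CREATE VIEW revenue_by_country AS
        SELECT country,
               SUM(amount) AS revenue,
               COUNT(DISTINCT email_hash) AS customers
        FROM raw_payments
        GROUP BY country
        """
    )


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    run_etlt(conn)
    for row in conn.execute("SELECT * FROM revenue_by_country ORDER BY country"):
        print(row)
```

Normalizing the email before hashing lets the warehouse still count distinct customers across differently cased inputs. A plain SHA-256 of an email can be reversed by hashing candidate addresses, so in practice a keyed hash (for example Python's `hmac` with a secret kept outside the warehouse) is the safer masking choice.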
