Async/Await in Rust: Tokio, Futures and Asynchronous Concurrency Explained

Rust async/await deep dive covering Tokio runtime, Futures trait, task spawning, structured concurrency, and real-world patterns for building high-performance asynchronous applications.

Rust async/await provides zero-cost asynchronous programming, but unlike languages such as JavaScript or Python, Rust does not bundle a runtime. The Future trait, the async/await keywords, and an external executor like Tokio form a three-layer system that gives full control over how concurrent work gets scheduled and executed.

A Rust async fn returns a state machine that implements Future. Nothing executes until an executor (like Tokio) polls that future. This lazy evaluation model eliminates hidden allocations and gives the compiler enough information to optimize aggressively.

How Rust Futures Differ from Other Languages

In JavaScript, calling an async function immediately starts execution and returns a Promise. In Rust, calling an async fn does nothing — it constructs a Future value that sits idle until polled. This distinction has practical consequences.
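The laziness described above can be observed directly with the standard library alone. The following is a minimal sketch, not how a real executor is written: `mark_started`, `NoopWaker`, and `observe_laziness` are hypothetical names introduced for illustration, and the future is polled by hand with a waker that does nothing.

```rust
use std::future::Future;
use std::pin::pin;
use std::sync::atomic::{AtomicBool, Ordering};
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};

// A waker that does nothing; enough for polling a future by hand.
struct NoopWaker;

impl Wake for NoopWaker {
    fn wake(self: Arc<Self>) {}
}

// Hypothetical async fn: flips the flag only when its body actually runs.
async fn mark_started(flag: Arc<AtomicBool>) -> u32 {
    flag.store(true, Ordering::SeqCst);
    7
}

// Returns (flag before the first poll, flag after it, output if ready).
fn observe_laziness() -> (bool, bool, Option<u32>) {
    let flag = Arc::new(AtomicBool::new(false));

    // Calling the async fn only builds the future; the body has not run yet.
    let fut = mark_started(Arc::clone(&flag));
    let before = flag.load(Ordering::SeqCst);

    // Poll once by hand, which is the job an executor normally does.
    let waker = Waker::from(Arc::new(NoopWaker));
    let mut cx = Context::from_waker(&waker);
    let mut fut = pin!(fut);
    let output = match fut.as_mut().poll(&mut cx) {
        Poll::Ready(value) => Some(value),
        Poll::Pending => None,
    };
    let after = flag.load(Ordering::SeqCst);

    (before, after, output)
}

fn main() {
    let (before, after, output) = observe_laziness();
    println!("before poll: {before}, after poll: {after}, output: {output:?}");
}
```

The flag stays `false` after the call to `mark_started` and only changes once `poll` is invoked, which is the practical difference from a JavaScript Promise.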

The Future trait defines a single method:

```rust
// core::future::Future
trait Future {
    type Output;

    fn poll(self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<Self::Output>;
}
```

Poll::Ready(value) signals completion. Poll::Pending tells the executor to park the task and wake it later through the Waker stored in Context. The executor never busy-waits: it relies on OS-level mechanisms (epoll on Linux, kqueue on macOS) to know when I/O is ready.
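To make the poll and wake cycle concrete, here is a small sketch of a hand-written future together with a toy executor, using only the standard library. `Countdown`, `ThreadWaker`, and `block_on` are hypothetical names for this illustration. The executor drives a single future and parks the thread between polls, which is far simpler than what Tokio does, and the future wakes itself immediately where a real I/O future would hand its waker to a reactor.

```rust
use std::future::Future;
use std::pin::{pin, Pin};
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};
use std::thread::{self, Thread};

// Hypothetical future: reports Pending `remaining` times, then Ready.
struct Countdown {
    remaining: u32,
    polls: u32,
}

impl Future for Countdown {
    // The output is the total number of polls it took to finish.
    type Output = u32;

    fn poll(mut self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<u32> {
        self.polls += 1;
        if self.remaining == 0 {
            Poll::Ready(self.polls)
        } else {
            self.remaining -= 1;
            // Request another poll. A real I/O future would register the
            // waker with a reactor instead of waking itself right away.
            cx.waker().wake_by_ref();
            Poll::Pending
        }
    }
}

// Waker that unparks the thread blocked inside `block_on`.
struct ThreadWaker(Thread);

impl Wake for ThreadWaker {
    fn wake(self: Arc<Self>) {
        self.0.unpark();
    }
}

// Toy single-future executor: poll, sleep until woken, poll again.
fn block_on<F: Future>(fut: F) -> F::Output {
    let mut fut = pin!(fut);
    let waker = Waker::from(Arc::new(ThreadWaker(thread::current())));
    let mut cx = Context::from_waker(&waker);
    loop {
        match fut.as_mut().poll(&mut cx) {
            Poll::Ready(output) => return output,
            // No busy-waiting: the thread sleeps until the waker fires.
            Poll::Pending => thread::park(),
        }
    }
}

fn main() {
    let polls = block_on(Countdown { remaining: 3, polls: 0 });
    println!("finished after {polls} polls");
}
```

The same `block_on` also drives compiler-generated futures, for example `block_on(async { 1 + 1 })`, because both kinds of future expose nothing but `poll`.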

This poll-based model means Rust futures are state machines compiled at build time, not heap-allocated callback chains. The compiler transforms each .await point into a state variant, producing code that rivals hand-written state machines in performance.
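As a rough illustration of that transformation, the sketch below hand-writes a state machine for the equivalent of `async { let a = first.await; a + second.await }`. This is an assumption-laden approximation, not the compiler's actual output: `AddTwo`, `NoopWaker`, and `run_add_two` are hypothetical names, and the inner futures are `std::future::Ready` values so that pinning stays trivial.

```rust
use std::future::{ready, Future, Ready};
use std::pin::Pin;
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};

// One variant per suspension point, plus a terminal state.
enum AddTwo {
    // Suspended at the first `.await`.
    AwaitingFirst { first: Ready<u32>, second: Ready<u32> },
    // Suspended at the second `.await`; `a` is the local saved across it.
    AwaitingSecond { a: u32, second: Ready<u32> },
    // Finished; polling again is a bug in the caller.
    Done,
}

impl Future for AddTwo {
    type Output = u32;

    fn poll(mut self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<u32> {
        loop {
            // Take the current state out, leaving `Done` as a placeholder.
            match std::mem::replace(&mut *self, AddTwo::Done) {
                AddTwo::AwaitingFirst { mut first, second } => {
                    match Pin::new(&mut first).poll(cx) {
                        // Advance to the next state and keep going.
                        Poll::Ready(a) => *self = AddTwo::AwaitingSecond { a, second },
                        Poll::Pending => {
                            // Restore the state so the next poll resumes here.
                            *self = AddTwo::AwaitingFirst { first, second };
                            return Poll::Pending;
                        }
                    }
                }
                AddTwo::AwaitingSecond { a, mut second } => {
                    match Pin::new(&mut second).poll(cx) {
                        Poll::Ready(b) => return Poll::Ready(a + b),
                        Poll::Pending => {
                            *self = AddTwo::AwaitingSecond { a, second };
                            return Poll::Pending;
                        }
                    }
                }
                AddTwo::Done => panic!("polled after completion"),
            }
        }
    }
}

// A waker that does nothing; enough for a single manual poll.
struct NoopWaker;

impl Wake for NoopWaker {
    fn wake(self: Arc<Self>) {}
}

// Builds the state machine in its initial state and polls it once.
fn run_add_two(x: u32, y: u32) -> Poll<u32> {
    let waker = Waker::from(Arc::new(NoopWaker));
    let mut cx = Context::from_waker(&waker);
    let mut fut = AddTwo::AwaitingFirst { first: ready(x), second: ready(y) };
    Pin::new(&mut fut).poll(&mut cx)
}

fn main() {
    println!("{:?}", run_add_two(2, 3));
}
```

Everything the computation needs between suspension points lives in the enum's fields, so the whole future is a single value whose size is known at compile time.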

Setting Up Tokio as the Async Runtime

Tokio is the most widely adopted async runtime in the Rust ecosystem. Its multi-threaded runtime is built around a work-stealing scheduler, and its timers are driven by a hierarchical timing wheel, which keeps scheduling and timeout overhead low even with a large number of tasks.

A minimal setup requires two things: a Tokio dependency in Cargo.toml and the #[tokio::main] attribute on main. The dependency entry looks like this:

```toml
# Cargo.toml
[dependencies]
tokio = { version = "1", features = ["rt-multi-thread", "macros", "net", "time"] }
```

The #[tokio::main] macro transforms the main function into an async entry point:

```rust
#[tokio::main]
async fn main() {
    let result = fetch_data().await;
    println!("Got: {result}");
}

async fn fetch_data() -> String {
    // Simulate async I/O
    tokio::time::sleep(std::time::Duration::from_millis(100)).await;
    String::from("data loaded")
}
```

Behind the scenes, #[tokio::main] expands to code that builds a multi-threaded runtime and blocks on the body of main until it completes. For applications that only need a single thread (CLI tools, scripts), #[tokio::main(flavor = "current_thread")] avoids the overhead of thread synchronization.

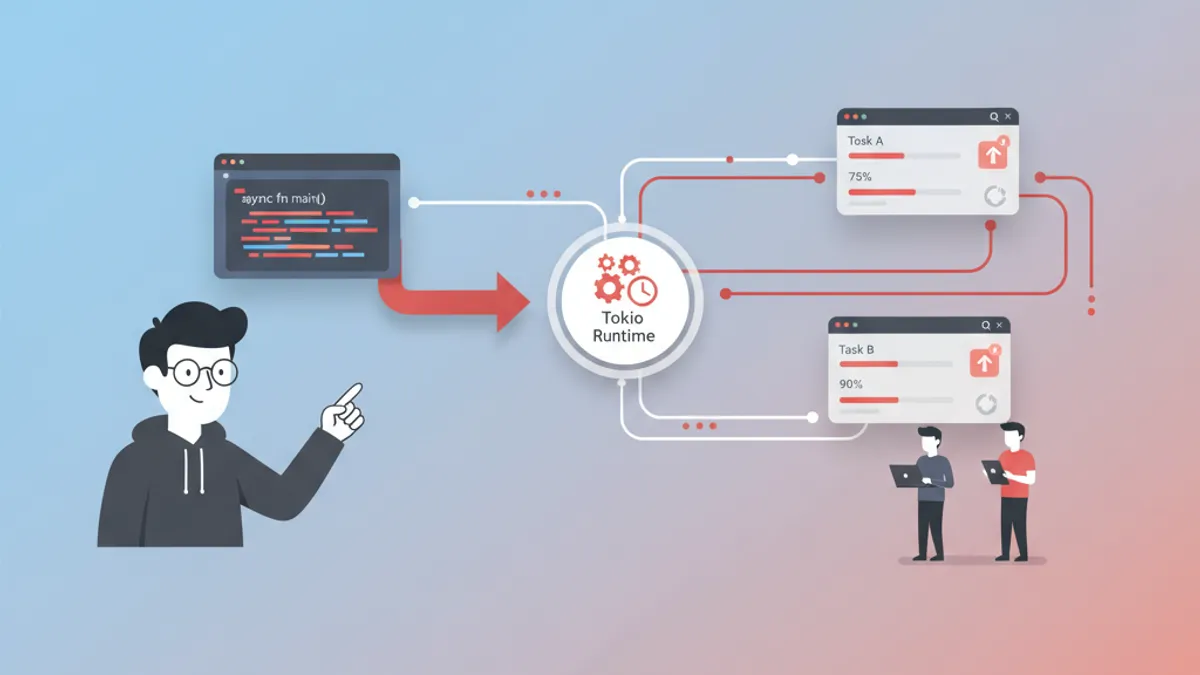

Task Spawning and Structured Concurrency

Sequential .await calls run one after another. To execute work concurrently, Tokio provides tokio::spawn, which schedules a future on the runtime's thread pool:

```rust
use tokio::task::JoinHandle;

#[tokio::main]
async fn main() {
    // Spawn two independent tasks
    let handle_a: JoinHandle<u32> = tokio::spawn(async {
        expensive_computation("dataset_a").await
    });
    let handle_b: JoinHandle<u32> = tokio::spawn(async {
        expensive_computation("dataset_b").await
    });

    // Await both results
    let (result_a, result_b) = (
        handle_a.await.expect("task A panicked"),
        handle_b.await.expect("task B panicked"),
    );
    println!("Results: {result_a}, {result_b}");
}

async fn expensive_computation(name: &str) -> u32 {
    tokio::time::sleep(std::time::Duration::from_secs(1)).await;
    println!("{name} done");
    42
}
```

Both tasks run in parallel across available threads. The JoinHandle returned by spawn acts as a future that resolves when the spawned task completes.

A critical constraint: tokio::spawn requires the future to be 'static, so it cannot borrow local variables. This forces explicit ownership transfer (typically with async move), which rules out references to a stack frame that may be gone by the time the task runs.

Spawned tasks must be Send + 'static. Data a spawned task needs must be moved or cloned into it, or shared through Arc, and any value held across an .await point must itself be Send so that the task can migrate between worker threads. Attempting to borrow stack-local references across a spawn boundary triggers a compile error.
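The ownership pattern can be tried without a runtime, because `std::thread::spawn` imposes the same `Send + 'static` bound on its closure. The sketch below uses threads as a stand-in for tasks; it is not Tokio code. `Settings` and `run_workers` are hypothetical names for this illustration.

```rust
use std::sync::Arc;
use std::thread;

// Hypothetical read-only configuration shared by all workers.
struct Settings {
    multiplier: u32,
}

fn run_workers() -> Vec<u32> {
    let settings = Arc::new(Settings { multiplier: 10 });

    let handles: Vec<_> = (1..=3u32)
        .map(|id| {
            // Each worker gets its own Arc handle to the same Settings.
            let settings = Arc::clone(&settings);
            // `move` transfers the cloned Arc into the closure, so the
            // closure owns everything it uses and satisfies 'static.
            thread::spawn(move || id * settings.multiplier)
        })
        .collect();

    // Join in spawn order, so the results keep that order.
    handles
        .into_iter()
        .map(|handle| handle.join().expect("worker panicked"))
        .collect()
}

fn main() {
    println!("{:?}", run_workers());
}
```

Replacing the closure with one that borrows `settings` directly, without `Arc::clone` and `move`, is rejected by the compiler for the same reason a borrowing future is rejected by tokio::spawn.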

Joining Multiple Futures with tokio::join! and tokio::select!

For a fixed number of concurrent operations, tokio::join! runs all futures to completion and returns a tuple of results:

```rust
use tokio::time::{sleep, Duration};

#[tokio::main]
async fn main() {
    // All three run concurrently, total time ~200ms (not 450ms)
    let (users, orders, inventory) = tokio::join!(
        fetch_users(),
        fetch_orders(),
        fetch_inventory()
    );
    println!("Users: {}, Orders: {}, Stock: {}", users.len(), orders.len(), inventory);
}

async fn fetch_users() -> Vec<String> {
    sleep(Duration::from_millis(200)).await;
    vec!["Alice".into(), "Bob".into()]
}

async fn fetch_orders() -> Vec<String> {
    sleep(Duration::from_millis(150)).await;
    vec!["ORD-001".into()]
}

async fn fetch_inventory() -> u32 {
    sleep(Duration::from_millis(100)).await;
    84
}
```

Unlike spawn, join! does not require 'static: the futures can borrow from the enclosing scope. The branches are polled concurrently on the current task rather than being scheduled as separate tasks, so they interleave at .await points but do not run in parallel on different threads. This makes join! the preferred choice when all branches need to complete and none of them is CPU-heavy.

tokio::select! waits for the first future to complete and cancels the rest:

```rust
use tokio::time::{sleep, Duration};

#[tokio::main]
async fn main() {
    tokio::select! {
        val = fetch_from_cache() => {
            println!("Cache hit: {val}");
        }
        val = fetch_from_database() => {
            println!("DB result: {val}");
        }
    }
}

async fn fetch_from_cache() -> String {
    sleep(Duration::from_millis(5)).await;
    "cached_value".into()
}

async fn fetch_from_database() -> String {
    sleep(Duration::from_millis(50)).await;
    "db_value".into()
}
```

The losing branch gets dropped, which runs destructors and frees resources. This pattern is essential for implementing timeouts, cancellation, and racing between data sources.


Error Handling in Async Rust

Async functions compose naturally with Rust's Result type. The ? operator works inside async fn just like synchronous code:

```rust
use std::io;

#[derive(Debug)]
enum AppError {
    Network(reqwest::Error),
    Parse(serde_json::Error),
    Io(io::Error),
}

async fn load_config(url: &str) -> Result<Config, AppError> {
    let response = reqwest::get(url)
        .await
        .map_err(AppError::Network)?;

    let text = response.text()
        .await
        .map_err(AppError::Network)?;

    let config: Config = serde_json::from_str(&text)
        .map_err(AppError::Parse)?;

    Ok(config)
}

#[derive(serde::Deserialize)]
struct Config {
    db_url: String,
    port: u16,
}
```

Each .await point is a potential suspension and a potential error site. Mapping errors explicitly keeps the async control flow readable and avoids the "callback hell" found in other async models.

Channels for Async Communication Between Tasks

Tokio provides async-aware channels that let spawned tasks communicate without shared mutable state:

```rust
use tokio::sync::mpsc;

#[tokio::main]
async fn main() {
    // Bounded channel with capacity 32
    let (tx, mut rx) = mpsc::channel::<String>(32);

    // Producer task
    let producer = tokio::spawn(async move {
        for i in 0..5 {
            tx.send(format!("message-{i}")).await.unwrap();
            tokio::time::sleep(std::time::Duration::from_millis(10)).await;
        }
        // tx dropped here, closing the channel
    });

    // Consumer reads until channel closes
    while let Some(msg) = rx.recv().await {
        println!("Received: {msg}");
    }

    producer.await.unwrap();
}
```

mpsc::channel creates a multi-producer, single-consumer channel. For sending exactly one value from one task to another (for example, handing back the result of a request), oneshot::channel provides a lighter-weight option. For broadcasting to multiple consumers, broadcast::channel clones messages to every active receiver.

The bounded capacity (32 in this example) applies backpressure: when the buffer fills, send().await suspends the producer until the consumer catches up. This prevents unbounded memory growth in high-throughput pipelines.
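The effect of a bounded buffer can be seen without a runtime by using the standard library's `sync_channel` as a stand-in. This is an analogy rather than Tokio code: a blocking `send` on the std channel blocks the thread where Tokio's `send().await` suspends the task, and `try_send` is used here so that the "buffer full" condition shows up as a value instead of a wait. `fill_until_full` is a hypothetical helper for this illustration.

```rust
use std::sync::mpsc::{sync_channel, TrySendError};

// Sends until the buffer is full, then frees one slot and tries again.
// Returns (messages accepted before Full, whether a send worked after draining one).
fn fill_until_full(capacity: usize) -> (usize, bool) {
    let (tx, rx) = sync_channel::<usize>(capacity);

    let mut accepted = 0;
    loop {
        match tx.try_send(accepted) {
            Ok(()) => accepted += 1,
            // The buffer is full: this is the point where an awaiting
            // producer would be suspended until the consumer catches up.
            Err(TrySendError::Full(_)) => break,
            Err(TrySendError::Disconnected(_)) => unreachable!("receiver is still alive"),
        }
    }

    // The consumer takes one message, which frees one slot.
    let drained = rx.try_recv().is_ok();
    let sent_after_drain = drained && tx.try_send(accepted).is_ok();

    (accepted, sent_after_drain)
}

fn main() {
    let (accepted, sent_after_drain) = fill_until_full(4);
    println!("accepted {accepted} before full, sent after drain: {sent_after_drain}");
}
```

With a capacity of 4, the producer gets exactly four messages in before it has to wait, and it can continue only after the consumer has taken one out.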

Real-World Pattern: Concurrent HTTP Requests with Rate Limiting

A common production scenario involves making many HTTP requests concurrently while capping how many are in flight at once, which is often the simplest way to stay within a service's rate limits. tokio::sync::Semaphore controls the degree of concurrency:

```rust
use std::sync::Arc;
use tokio::sync::Semaphore;

async fn fetch_all(urls: Vec<String>, max_concurrent: usize) -> Vec<Result<String, String>> {
    let semaphore = Arc::new(Semaphore::new(max_concurrent));
    let mut handles = Vec::new();

    for url in urls {
        let sem = Arc::clone(&semaphore);
        let handle = tokio::spawn(async move {
            // Acquire permit before making request
            let _permit = sem.acquire().await.unwrap();

            reqwest::get(&url)
                .await
                .map(|r| r.status().to_string())
                .map_err(|e| e.to_string())
            // permit dropped here, allowing next task to proceed
        });
        handles.push(handle);
    }

    let mut results = Vec::new();
    for handle in handles {
        results.push(handle.await.unwrap());
    }
    results
}
```

The semaphore limits active requests to max_concurrent. All tasks are spawned immediately, but at most max_concurrent of them run their HTTP call at any given time. When a task finishes and drops its permit, another task wakes up and proceeds.

tokio::join! suits a known, fixed set of futures. tokio::spawn + Semaphore suits a dynamic, potentially large collection. select! suits racing or timeout scenarios. Picking the right primitive avoids both under-utilization and resource exhaustion.

Pin and Unpin: Why Async Rust Needs Them

The Pin type appears in the Future::poll signature for a reason. Async state machines may contain self-referential fields — a reference in one state pointing to data stored in another part of the same struct. Moving the struct in memory would invalidate that reference.

Pin<&mut Self> guarantees the future will not be moved after it starts polling. Most ordinary types are Unpin (safe to move even while pinned), but the futures the compiler generates for async fn and async blocks are not. In everyday code this stays invisible because .await pins the future it drives. Manual Pin management only becomes necessary when implementing custom Future types or working with stream patterns such as async_stream.

For day-to-day async Rust, the practical takeaway: Box::pin(future) converts any future into a pinned, heap-allocated form that can be stored in collections or returned from trait methods.
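A minimal sketch of that takeaway, using only the standard library: two different async functions produce two different anonymous future types, and Box::pin lets both live in one Vec behind a trait object. `BoxedFuture`, `double`, `add_one`, `NoopWaker`, and `run_boxed` are hypothetical names, and the futures are polled once by hand because they never suspend; a real program would hand them to an executor.

```rust
use std::future::Future;
use std::pin::Pin;
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};

// One nameable type for "some pinned, heap-allocated future yielding u32".
type BoxedFuture = Pin<Box<dyn Future<Output = u32>>>;

async fn double(x: u32) -> u32 {
    x * 2
}

async fn add_one(x: u32) -> u32 {
    x + 1
}

// A waker that does nothing; enough for polling by hand.
struct NoopWaker;

impl Wake for NoopWaker {
    fn wake(self: Arc<Self>) {}
}

fn run_boxed() -> Vec<u32> {
    // `double(..)` and `add_one(..)` have different concrete types;
    // boxing erases the difference so they fit in the same collection.
    let mut futures: Vec<BoxedFuture> = Vec::new();
    futures.push(Box::pin(double(4)));
    futures.push(Box::pin(add_one(4)));

    let waker = Waker::from(Arc::new(NoopWaker));
    let mut cx = Context::from_waker(&waker);

    let mut outputs = Vec::new();
    for fut in futures.iter_mut() {
        // `as_mut` turns `&mut Pin<Box<..>>` into the `Pin<&mut ..>` that poll needs.
        if let Poll::Ready(value) = fut.as_mut().poll(&mut cx) {
            outputs.push(value);
        }
    }
    outputs
}

fn main() {
    println!("{:?}", run_boxed());
}
```

The cost of this flexibility is one heap allocation and dynamic dispatch per future, which is why it is usually reserved for collections and trait-object boundaries.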

Performance Characteristics and When to Use Async

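Async Rust shines for I/O-bound workloads: HTTP servers, database clients, message brokers, file watchers. A task has no stack of its own; it is a state machine whose size is fixed at compile time, often a few hundred bytes to a few kilobytes, compared with a default stack of 2 MiB for a thread spawned through std::thread (and typically 8 MB for the main thread on Linux). That makes it feasible to run hundreds of thousands of concurrent tasks on a single machine.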
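The memory cost of a future can be inspected directly, since its size is known at compile time. The sketch below compares two hypothetical async functions, `small` and `holds_buffer`; exact sizes depend on the compiler version, so the code prints them rather than assuming particular numbers. The point is only that a local kept alive across an `.await` has to be stored inside the future.

```rust
use std::future::ready;
use std::mem::size_of_val;

// Nothing of note is kept alive across the await.
async fn small() -> u8 {
    ready(()).await;
    1
}

// `buf` is used after the await, so it must be stored in the future itself.
async fn holds_buffer() -> u8 {
    let buf = [1u8; 1024];
    ready(()).await;
    buf[0]
}

// Returns (size of `small`'s future, size of `holds_buffer`'s future) in bytes.
fn future_sizes() -> (usize, usize) {
    // Constructing the futures runs none of their code; only the size is measured.
    (size_of_val(&small()), size_of_val(&holds_buffer()))
}

fn main() {
    let (small_size, buffer_size) = future_sizes();
    println!("small: {small_size} bytes, holds_buffer: {buffer_size} bytes");
}
```

Large buffers held across suspension points therefore inflate every task that runs the function, which is worth keeping in mind when spawning tasks by the thousand.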

For CPU-bound work, tokio::task::spawn_blocking offloads computation to a dedicated thread pool, preventing it from starving the async executor:

```rust
#[tokio::main]
async fn main() {
    let hash = tokio::task::spawn_blocking(|| {
        // CPU-intensive work runs on a blocking thread
        compute_hash(b"large dataset")
    })
    .await
    .unwrap();

    println!("Hash: {hash}");
}

fn compute_hash(data: &[u8]) -> String {
    use std::collections::hash_map::DefaultHasher;
    use std::hash::{Hash, Hasher};

    let mut hasher = DefaultHasher::new();
    data.hash(&mut hasher);
    format!("{:x}", hasher.finish())
}
```

Mixing spawn_blocking with async I/O keeps the event loop responsive while still leveraging all CPU cores for heavy computation.


Conclusion

- Rust futures are lazy state machines: nothing runs until an executor polls them, giving full control over scheduling and resource usage
- Tokio provides the runtime, with spawn for independent tasks, join! for concurrent completion, and select! for racing
- The Send + 'static constraint on spawned tasks catches data races at compile time, trading some ergonomic friction for guaranteed thread safety
- Channels (mpsc, oneshot, broadcast) decouple task communication without shared mutable state
- Semaphore and bounded channels provide backpressure, preventing resource exhaustion in production systems
- spawn_blocking bridges the gap between async I/O and CPU-bound computation
- Mastering these primitives (the Future trait, the Tokio runtime, and Rust's ownership rules) unlocks Rust's potential for building high-throughput, low-latency services

Start practicing!

Test your knowledge with our interview simulators and technical tests.

Tags

Share

Related articles

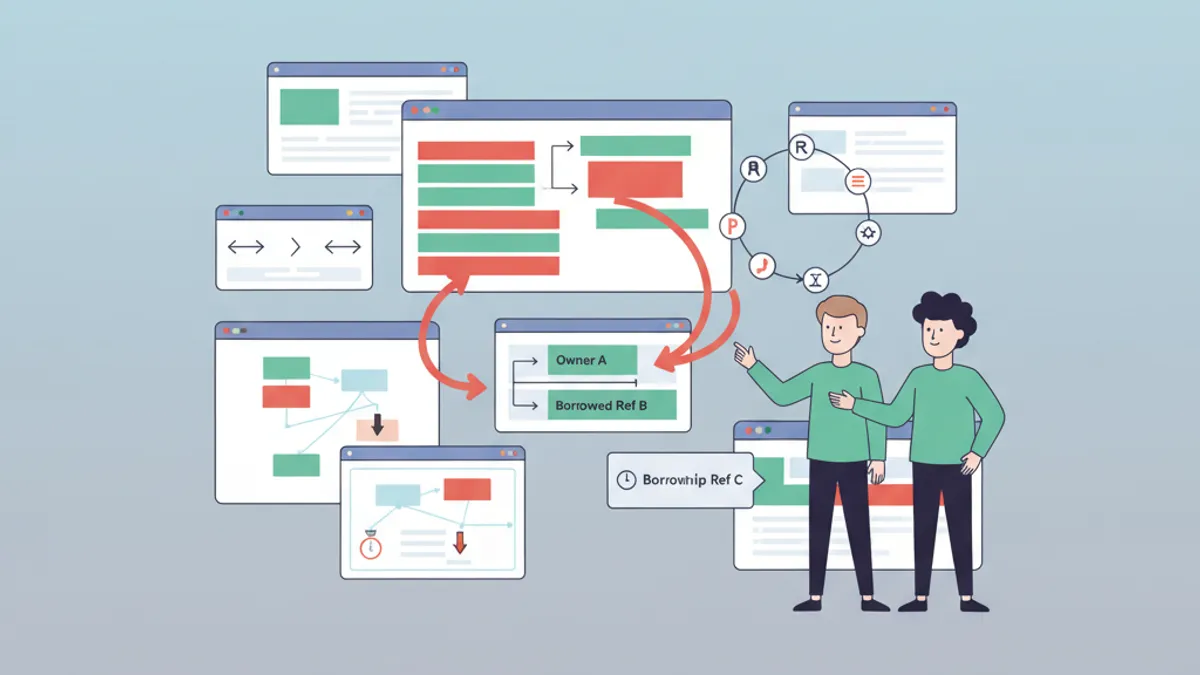

Rust Ownership and Borrowing: The Guide That Demystifies Everything

Master Rust ownership and borrowing with practical examples. Understand move semantics, references, lifetimes, and the borrow checker to write safe, efficient Rust code.

Ownership and Borrowing in Rust: Complete Guide

Master Rust's ownership and borrowing system. Understand property rules, references, lifetimes, and advanced memory management patterns.

Rust Interview Questions: Complete Guide 2026

The 25 most common Rust interview questions. Ownership, borrowing, lifetimes, traits, async and concurrency with detailed answers and code examples.