Go Concurrency: Goroutines and Channels - Complete Guide

Master Go concurrency with goroutines and channels. Advanced patterns, synchronization, select statements, and best practices with detailed code examples.

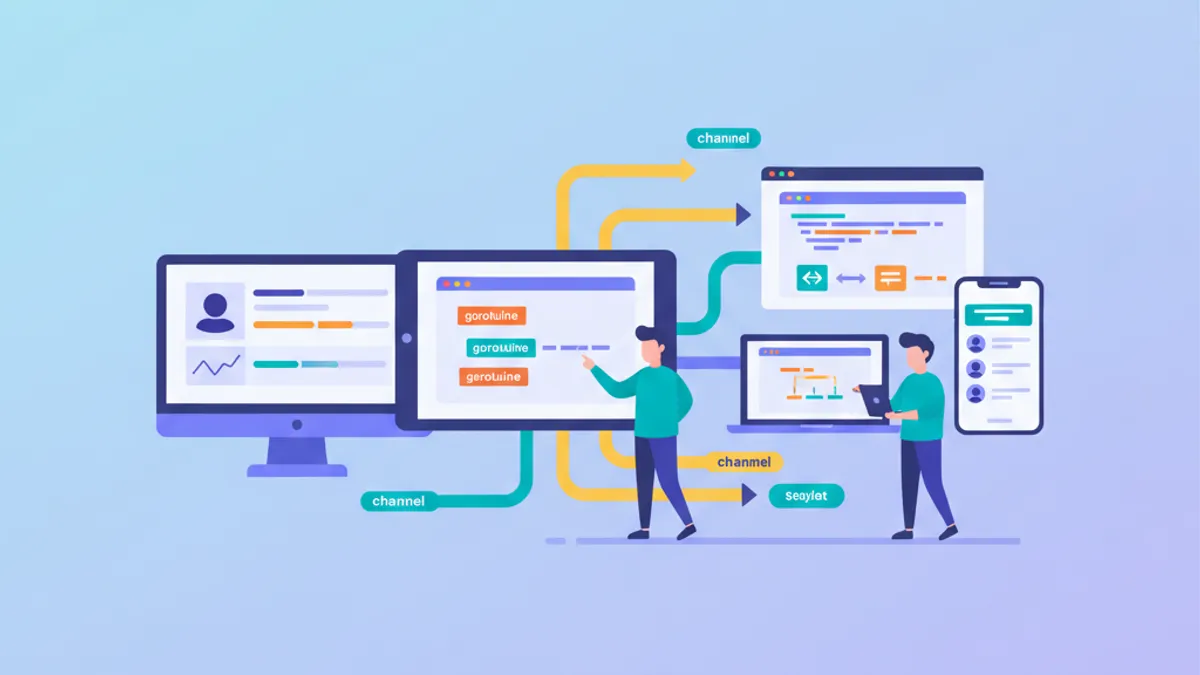

Concurrency stands as one of Go's greatest strengths. Unlike other languages where multithreading remains complex, Go provides an elegant model based on goroutines and channels that significantly simplifies concurrent application development.

"Don't communicate by sharing memory; share memory by communicating." This fundamental principle guides all concurrency design in Go.

Understanding Goroutines

Goroutines are lightweight threads managed by the Go runtime. Each one starts with a stack of roughly 2 KB that grows on demand (compared to a default of 1 MB or more for a typical OS thread), so a program can run thousands of concurrent tasks with very little overhead.

Launching a goroutine requires simply placing the go keyword before a function call. The runtime handles scheduling and distribution across available threads.

```go
package main

import (
	"fmt"
	"time"
)

// fetchData simulates a network request
func fetchData(id int) {
	// Simulates network delay
	time.Sleep(100 * time.Millisecond)
	fmt.Printf("Data %d fetched\n", id)
}

func main() {
	// Sequential execution - 500ms total
	start := time.Now()
	for i := 1; i <= 5; i++ {
		fetchData(i)
	}
	fmt.Printf("Sequential: %v\n", time.Since(start))

	// Concurrent execution - the work itself finishes in ~100ms
	start = time.Now()
	for i := 1; i <= 5; i++ {
		go fetchData(i) // Execute as goroutine
	}
	time.Sleep(150 * time.Millisecond) // Crude wait; the measured time includes all 150ms
	fmt.Printf("Concurrent: %v\n", time.Since(start))
}
```

Concurrent execution cuts the work from 500ms to approximately 100ms, although the program reports about 150ms because the measurement includes the fixed sleep. Using time.Sleep for goroutine synchronization is not a best practice: a guessed delay either wastes time or ends before the goroutines finish. Channels offer an elegant solution.
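As a minimal sketch of a more reliable wait, the fixed sleep can be swapped for a sync.WaitGroup, which the worker pool section also relies on. The helper name fetchAll is an assumption made for illustration, and it returns strings instead of printing so the results are easy to inspect.

```go
package main

import (
	"fmt"
	"sync"
	"time"
)

// fetchAll is a hypothetical helper: it launches one goroutine per id and
// returns only after every goroutine has called Done.
func fetchAll(ids []int) []string {
	results := make([]string, len(ids))
	var wg sync.WaitGroup
	for i, id := range ids {
		wg.Add(1)
		go func(i, id int) {
			defer wg.Done()
			time.Sleep(100 * time.Millisecond) // Simulated network delay
			// Each goroutine writes to its own slot, so no lock is needed
			results[i] = fmt.Sprintf("Data %d fetched", id)
		}(i, id)
	}
	wg.Wait() // Blocks until the counter drops back to zero
	return results
}

func main() {
	start := time.Now()
	for _, line := range fetchAll([]int{1, 2, 3, 4, 5}) {
		fmt.Println(line)
	}
	fmt.Printf("Concurrent: %v\n", time.Since(start))
}
```

With this approach the measured time tracks the slowest request rather than a guessed delay.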

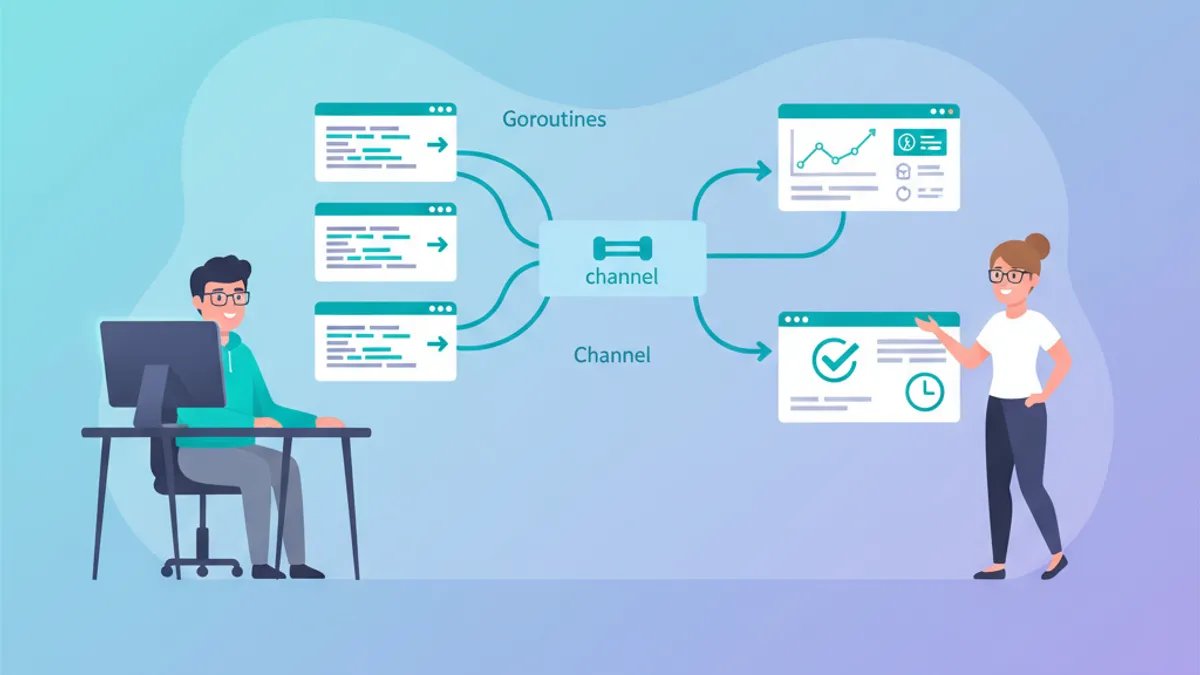

Channels: Communication Between Goroutines

A channel is a typed conduit for sending and receiving values between goroutines. Channels also provide synchronization: on an unbuffered channel, a sending goroutine waits until another goroutine receives, and vice versa.

Channel creation uses the make function. The <- operator sends and receives data depending on its placement relative to the channel.

```go
package main

import "fmt"

// worker performs computation and returns result via channel
func worker(id int, jobs <-chan int, results chan<- int) {
	// Receives jobs until channel closes
	for job := range jobs {
		result := job * 2 // Processing
		results <- result // Send result
	}
}

func main() {
	// Create channels
	jobs := make(chan int, 10) // Buffered channel
	results := make(chan int, 10)

	// Start 3 workers
	for w := 1; w <= 3; w++ {
		go worker(w, jobs, results)
	}

	// Send 5 jobs
	for j := 1; j <= 5; j++ {
		jobs <- j
	}
	close(jobs) // Signal end of jobs

	// Collect results
	for r := 1; r <= 5; r++ {
		result := <-results
		fmt.Printf("Result: %d\n", result)
	}
}
```

Directional channels (<-chan for receive, chan<- for send) enhance code safety by limiting possible operations.
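To make the restriction concrete, the sketch below shows which operations the compiler rejects on each direction. The function names produce and sum are assumptions chosen for illustration; the commented-out lines are the ones that would fail to compile.

```go
package main

import "fmt"

// produce only sends, so it takes a send-only channel.
func produce(n int, out chan<- int) {
	for i := 1; i <= n; i++ {
		out <- i
	}
	close(out) // Closing is allowed on a send-only channel
	// v := <-out // Compile error: cannot receive from a send-only channel
}

// sum only receives, so it takes a receive-only channel.
func sum(in <-chan int) int {
	total := 0
	for v := range in {
		total += v
	}
	// in <- 1 // Compile error: cannot send to a receive-only channel
	return total
}

func main() {
	ch := make(chan int) // Bidirectional; converts implicitly at each call
	go produce(4, ch)
	fmt.Println(sum(ch)) // 10
}
```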

Buffered vs Unbuffered Channels

The distinction between these channel types directly affects goroutine synchronization behavior.

Unbuffered channels block the sender until a receiver is ready. Buffered channels allow sending up to N values without blocking, where N represents the buffer capacity.

```go
package main

import "fmt"

func main() {
	// Unbuffered channel - strict synchronization
	unbuffered := make(chan string)
	go func() {
		unbuffered <- "message" // Blocks until received
	}()
	msg := <-unbuffered // Unblocks the send
	fmt.Println(msg)

	// Buffered channel - capacity of 2
	buffered := make(chan int, 2)

	// These sends don't block
	buffered <- 1
	buffered <- 2
	// buffered <- 3 // Would block because buffer is full

	fmt.Println(<-buffered) // 1
	fmt.Println(<-buffered) // 2

	// Check capacity
	fmt.Printf("Length: %d, Capacity: %d\n",
		len(buffered), cap(buffered))
}
```

Buffered channels decouple producers and consumers, while unbuffered ones guarantee point-to-point synchronization.

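A deadlock occurs when goroutines are blocked waiting on each other and none can make progress. When every goroutine is blocked, the Go runtime detects this and terminates the program with "fatal error: all goroutines are asleep - deadlock!". It does not detect partial deadlocks, where some goroutines are stuck while others keep running.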
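A minimal sketch of the most common cause, and one way around it: sending on an unbuffered channel from the only running goroutine. The helper name echo is an assumption for illustration; the commented-out line is the one that would trigger the runtime's deadlock error.

```go
package main

import "fmt"

// echo is a hypothetical helper that sends v on an unbuffered channel and
// reads it back without deadlocking.
func echo(v int) int {
	ch := make(chan int)

	// ch <- v // Deadlock if done here: no other goroutine can ever receive

	go func() { ch <- v }() // The send now blocks in its own goroutine
	return <-ch             // The receive pairs with that send
}

func main() {
	fmt.Println(echo(42)) // 42
}
```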

Select: Channel Multiplexing

The select statement waits on multiple channel operations simultaneously. It resembles a switch statement for concurrent communications.

This construct is essential for handling timeouts, cancellations, and multiple communications without blocking indefinitely on a single channel.

```go
package main

import (
	"fmt"
	"time"
)

// fetchAPI simulates an API call with variable delay
func fetchAPI(name string, delay time.Duration, ch chan<- string) {
	time.Sleep(delay)
	ch <- fmt.Sprintf("%s: data received", name)
}

func main() {
	api1 := make(chan string)
	api2 := make(chan string)

	// Launch two API calls in parallel
	go fetchAPI("API-1", 100*time.Millisecond, api1)
	go fetchAPI("API-2", 200*time.Millisecond, api2)

	// Global timeout of 150ms
	timeout := time.After(150 * time.Millisecond)

	// Collect results with timeout
	for i := 0; i < 2; i++ {
		select {
		case result := <-api1:
			fmt.Println(result)
		case result := <-api2:
			fmt.Println(result)
		case <-timeout:
			fmt.Println("Timeout - operation cancelled")
			return
		}
	}
}
```

The select chooses the first ready channel. If multiple are ready, the choice is pseudo-random to prevent starvation.
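The pseudo-random choice can be observed with a small experiment. This is only a sketch: countSelections is a hypothetical helper that keeps two buffered channels permanently ready and tallies which case select picks.

```go
package main

import "fmt"

// countSelections keeps two buffered channels permanently ready and records
// which case select picks on each of n rounds.
func countSelections(n int) (fromA, fromB int) {
	a := make(chan struct{}, 1)
	b := make(chan struct{}, 1)
	for i := 0; i < n; i++ {
		// Top both channels up so that both receive cases are ready
		select {
		case a <- struct{}{}:
		default:
		}
		select {
		case b <- struct{}{}:
		default:
		}
		select {
		case <-a:
			fromA++
		case <-b:
			fromB++
		}
	}
	return fromA, fromB
}

func main() {
	a, b := countSelections(1000)
	fmt.Printf("a: %d, b: %d\n", a, b) // Roughly even split; exact counts vary
}
```

Because neither case is favored by its position in the source, code should not rely on case order to express priority.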

Worker Pool Pattern

The worker pool pattern distributes tasks among multiple workers, limiting concurrency and optimizing resource usage. This pattern proves indispensable for processing large amounts of data.

The implementation relies on a shared jobs channel among workers and a results channel for collection.

```go
package main

import (
	"fmt"
	"sync"
	"time"
)

// Task represents a unit of work
type Task struct {
	ID   int
	Data string
}

// Result contains the processing result
type Result struct {
	TaskID int
	Output string
}

// worker processes received tasks
func worker(id int, tasks <-chan Task, results chan<- Result, wg *sync.WaitGroup) {
	defer wg.Done()
	for task := range tasks {
		// Simulate processing
		time.Sleep(50 * time.Millisecond)
		results <- Result{
			TaskID: task.ID,
			Output: fmt.Sprintf("Worker %d processed: %s", id, task.Data),
		}
	}
}

func main() {
	const numWorkers = 3
	const numTasks = 10

	tasks := make(chan Task, numTasks)
	results := make(chan Result, numTasks)
	var wg sync.WaitGroup

	// Start workers
	for w := 1; w <= numWorkers; w++ {
		wg.Add(1)
		go worker(w, tasks, results, &wg)
	}

	// Send tasks
	for i := 1; i <= numTasks; i++ {
		tasks <- Task{ID: i, Data: fmt.Sprintf("task-%d", i)}
	}
	close(tasks)

	// Close results channel after workers finish
	go func() {
		wg.Wait()
		close(results)
	}()

	// Collect results
	for result := range results {
		fmt.Printf("Task %d: %s\n", result.TaskID, result.Output)
	}
}
```

The sync.WaitGroup coordinates waiting for all workers to finish before closing the results channel.
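When tasks do not need long-lived workers, a related technique is to cap concurrency with a buffered channel used as a semaphore. This is a different mechanism from the worker pool above, shown here only as a sketch; runLimited and its peak measurement are assumptions added for illustration.

```go
package main

import (
	"fmt"
	"sync"
	"sync/atomic"
	"time"
)

// runLimited is a hypothetical helper: it runs n tasks with at most limit of
// them active at once and returns the highest concurrency it observed.
func runLimited(n, limit int) int64 {
	sem := make(chan struct{}, limit) // Buffered channel used as a semaphore
	var wg sync.WaitGroup
	var active, peak int64

	for i := 0; i < n; i++ {
		wg.Add(1)
		sem <- struct{}{} // Acquire a slot; blocks while limit tasks are running
		go func() {
			defer wg.Done()
			defer func() { <-sem }() // Release the slot

			cur := atomic.AddInt64(&active, 1)
			// Record the peak with a compare-and-swap loop
			for {
				old := atomic.LoadInt64(&peak)
				if cur <= old || atomic.CompareAndSwapInt64(&peak, old, cur) {
					break
				}
			}
			time.Sleep(10 * time.Millisecond) // Simulated work
			atomic.AddInt64(&active, -1)
		}()
	}
	wg.Wait()
	return atomic.LoadInt64(&peak)
}

func main() {
	fmt.Println("peak concurrency:", runLimited(10, 3))
}
```

The semaphore starts one goroutine per task, so it suits short-lived work; a fixed pool of workers avoids that per-task startup cost.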

Fan-Out/Fan-In Pattern

This pattern distributes work among multiple goroutines (fan-out) then aggregates results (fan-in). It maximizes parallelism while simplifying result collection.

```go
package main

import (
	"fmt"
	"sync"
)

// generate produces numbers on a channel
func generate(nums ...int) <-chan int {
	out := make(chan int)
	go func() {
		for _, n := range nums {
			out <- n
		}
		close(out)
	}()
	return out
}

// square computes the square of received numbers
func square(in <-chan int) <-chan int {
	out := make(chan int)
	go func() {
		for n := range in {
			out <- n * n
		}
		close(out)
	}()
	return out
}

// merge combines multiple channels into one (fan-in)
func merge(channels ...<-chan int) <-chan int {
	out := make(chan int)
	var wg sync.WaitGroup

	// Output function for each channel
	output := func(c <-chan int) {
		defer wg.Done()
		for n := range c {
			out <- n
		}
	}

	// Launch a goroutine per channel
	wg.Add(len(channels))
	for _, c := range channels {
		go output(c)
	}

	// Close after all goroutines finish
	go func() {
		wg.Wait()
		close(out)
	}()

	return out
}

func main() {
	// Generate data
	numbers := generate(1, 2, 3, 4, 5, 6, 7, 8)

	// Fan-out: distribute to 3 workers
	sq1 := square(numbers)
	sq2 := square(numbers)
	sq3 := square(numbers)

	// Fan-in: aggregate results
	for result := range merge(sq1, sq2, sq3) {
		fmt.Println(result)
	}
}
```

This pattern excels for distributable CPU-bound operations and data processing pipelines.
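Results from a fan-in arrive in no guaranteed order, so callers that need stable output usually collect and sort them. The sketch below condenses the pattern into a single function with a configurable worker count; squareAll is a hypothetical name, not part of the example above.

```go
package main

import (
	"fmt"
	"sort"
	"sync"
)

// squareAll is a hypothetical helper: it fans the input out to `workers`
// goroutines, fans their results into one channel, and sorts the output
// because arrival order is not deterministic.
func squareAll(nums []int, workers int) []int {
	in := make(chan int)
	out := make(chan int)

	// Producer
	go func() {
		for _, n := range nums {
			in <- n
		}
		close(in)
	}()

	// Fan-out: every worker reads from the same input channel
	var wg sync.WaitGroup
	wg.Add(workers)
	for w := 0; w < workers; w++ {
		go func() {
			defer wg.Done()
			for n := range in {
				out <- n * n
			}
		}()
	}

	// Close the merged channel once all workers are done
	go func() {
		wg.Wait()
		close(out)
	}()

	// Fan-in: a single loop drains the merged channel
	var squares []int
	for sq := range out {
		squares = append(squares, sq)
	}
	sort.Ints(squares)
	return squares
}

func main() {
	fmt.Println(squareAll([]int{1, 2, 3, 4, 5, 6, 7, 8}, 3))
	// [1 4 9 16 25 36 49 64]
}
```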

Context for Cancellation and Deadlines

The context package standardizes cancellation, deadline, and value management across goroutines. By convention, any long-running or blocking function accepts a context.Context as its first parameter.

```go
package main

import (
	"context"
	"fmt"
	"time"
)

// fetchWithContext simulates a cancellable request
func fetchWithContext(ctx context.Context, url string) (string, error) {
	// Simulates a long operation
	select {
	case <-time.After(2 * time.Second):
		return fmt.Sprintf("Data from %s", url), nil
	case <-ctx.Done():
		return "", ctx.Err() // context.Canceled or context.DeadlineExceeded
	}
}

func main() {
	// Context with 500ms timeout
	ctx, cancel := context.WithTimeout(context.Background(), 500*time.Millisecond)
	defer cancel() // Release resources

	result, err := fetchWithContext(ctx, "https://api.example.com")
	if err != nil {
		// The simulated request takes 2s, so the 500ms deadline fires first.
		// No return here, so the second example below still runs.
		fmt.Printf("Error: %v\n", err)
	} else {
		fmt.Println(result)
	}

	// Context with manual cancellation
	ctx2, cancel2 := context.WithCancel(context.Background())
	go func() {
		time.Sleep(100 * time.Millisecond)
		cancel2() // Explicit cancellation
	}()

	result, err = fetchWithContext(ctx2, "https://api2.example.com")
	if err != nil {
		fmt.Printf("Request cancelled: %v\n", err)
	}
}
```

Always call defer cancel() immediately after creating a context to avoid resource leaks.

Synchronization with sync.Mutex

While channels are preferred for communication, the sync package remains necessary for protecting concurrent access to shared data structures.

```go
package main

import (
	"fmt"
	"sync"
)

// SafeCounter is a thread-safe counter
type SafeCounter struct {
	mu    sync.Mutex
	value map[string]int
}

// Increment increments the value for a given key
func (c *SafeCounter) Increment(key string) {
	c.mu.Lock()         // Exclusive lock
	defer c.mu.Unlock() // Guaranteed unlock
	c.value[key]++
}

// Value returns the current value
func (c *SafeCounter) Value(key string) int {
	c.mu.Lock()
	defer c.mu.Unlock()
	return c.value[key]
}

func main() {
	counter := SafeCounter{value: make(map[string]int)}
	var wg sync.WaitGroup

	// 1000 concurrent increments
	for i := 0; i < 1000; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			counter.Increment("visits")
		}()
	}

	wg.Wait()
	fmt.Printf("Total: %d\n", counter.Value("visits")) // 1000
}
```

The sync.RWMutex optimizes concurrent reads with RLock()/RUnlock() for read-only operations.
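A minimal sketch of that read/write split, assuming a read-heavy lookup table. The Cache type and its methods are hypothetical names introduced for illustration.

```go
package main

import (
	"fmt"
	"sync"
)

// Cache is a hypothetical read-heavy map guarded by a sync.RWMutex.
type Cache struct {
	mu   sync.RWMutex
	data map[string]string
}

// Get takes the shared read lock, so many readers can run at once.
func (c *Cache) Get(key string) (string, bool) {
	c.mu.RLock()
	defer c.mu.RUnlock()
	v, ok := c.data[key]
	return v, ok
}

// Set takes the exclusive write lock, which waits for readers to finish.
func (c *Cache) Set(key, value string) {
	c.mu.Lock()
	defer c.mu.Unlock()
	c.data[key] = value
}

func main() {
	cache := Cache{data: make(map[string]string)}
	cache.Set("lang", "Go")

	var wg sync.WaitGroup
	for i := 0; i < 100; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			cache.Get("lang") // Concurrent reads do not block each other
		}()
	}
	wg.Wait()

	v, ok := cache.Get("lang")
	fmt.Println(v, ok) // Go true
}
```

The read lock pays off only when reads clearly outnumber writes; for write-heavy data a plain sync.Mutex is usually simpler and just as fast.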

Common Mistakes and Solutions

Go concurrency presents classic pitfalls. Here are the most common errors and how to avoid them.

```go
package main

import (
	"fmt"
	"sync"
)

func main() {
	// ERROR (before Go 1.22): Loop variable capture
	// All iterations shared a single i, so the goroutines could all print
	// the same value. Since Go 1.22 each iteration gets its own i.
	for i := 0; i < 3; i++ {
		go func() {
			fmt.Println(i) // Shared variable before Go 1.22 - BUG
		}()
	}

	// SOLUTION: Pass value as parameter (works on every Go version)
	var wg sync.WaitGroup
	for i := 0; i < 3; i++ {
		wg.Add(1)
		go func(n int) {
			defer wg.Done()
			fmt.Println(n) // Local copy - CORRECT
		}(i)
	}
	wg.Wait()

	// ERROR: Send on nil channel
	var ch chan int
	// ch <- 1 // Blocks forever

	// SOLUTION: Always initialize with make
	ch = make(chan int, 1)
	ch <- 1
	fmt.Println(<-ch)

	// ERROR: Send on closed channel
	done := make(chan bool)
	var once sync.Once
	closeDone := func() { once.Do(func() { close(done) }) }
	closeDone()
	closeDone() // Safe: sync.Once prevents a double-close panic
	// done <- true // Panic! A select with a default case panics as well

	// SOLUTION: let only the sending side close the channel, and have
	// receivers detect closure with the two-value receive
	if _, ok := <-done; !ok {
		fmt.Println("Channel closed")
	}
}
```

The race detector is enabled with the -race flag at build or test time, for example go test -race ./... or go run -race main.go.
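As an illustration of what the race detector reports and one way to resolve it, the sketch below increments a shared counter from many goroutines. countAtomic is a hypothetical helper; the commented-out line is the unsynchronized version that -race would flag.

```go
package main

import (
	"fmt"
	"sync"
	"sync/atomic"
)

// countAtomic increments a shared counter from n goroutines using
// sync/atomic, so the race detector stays silent.
func countAtomic(n int) int64 {
	var total int64
	var wg sync.WaitGroup
	for i := 0; i < n; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			// total++ // Unsynchronized write: flagged under -race
			atomic.AddInt64(&total, 1)
		}()
	}
	wg.Wait()
	return atomic.LoadInt64(&total)
}

func main() {
	fmt.Println(countAtomic(1000)) // 1000
}
```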

Conclusion

Mastering Go concurrency relies on a few key concepts that, when well understood, enable building highly performant applications.

Key takeaways:

✅ Goroutines are lightweight and cheap - creating thousands remains acceptable

✅ Channels synchronize and transfer data between goroutines

✅ The select statement handles multiple communications and timeouts

✅ The worker pool pattern limits concurrency and optimizes resources

✅ The context package standardizes cancellation and deadlines

✅ Mutexes protect shared data when channels are insufficient

✅ The -race flag detects data races during testing

The "share memory by communicating" philosophy leads to designs that are safer and easier to maintain than traditional lock-based multithreading.
