Go Technical Interview: Goroutines, Channels and Concurrency

Go interview questions on goroutines, channels, and concurrency patterns. Code examples, common pitfalls, and expert-level answers to prepare for Go technical interviews in 2026.

Go interview questions on goroutines, channels, and concurrency consistently rank among the most challenging topics candidates face. Understanding these concepts at a deep level separates senior Go engineers from those still learning the language. This guide covers the exact questions interviewers ask in 2026, with production-grade code examples and the reasoning behind each answer.

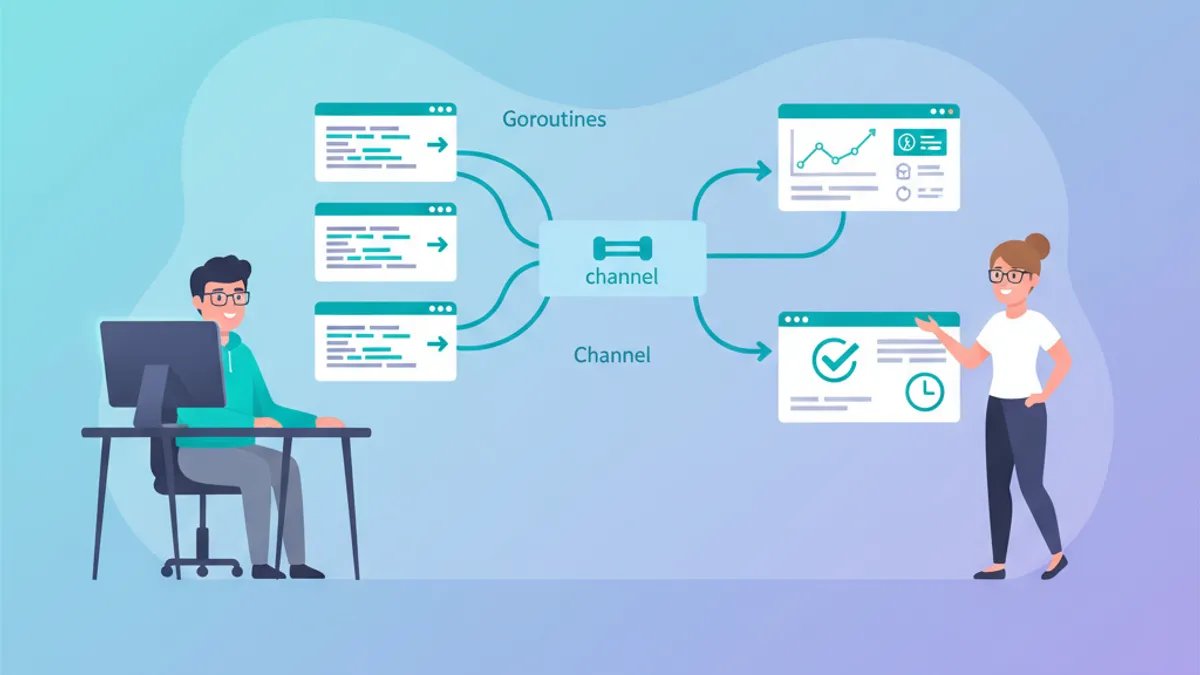

Go concurrency interviews focus on three areas: goroutine lifecycle management, channel semantics (buffered vs unbuffered, directional types), and pattern composition (fan-out/fan-in, worker pools, context cancellation). Memorizing syntax is insufficient — interviewers expect candidates to reason about race conditions and deadlocks.

Goroutine Fundamentals: What Every Interviewer Asks

The first round of questions typically probes whether a candidate understands what goroutines actually are — not just how to spawn them.

Q: What is a goroutine, and how does it differ from an OS thread?

A goroutine is a lightweight concurrent function managed by the Go runtime scheduler, not the operating system. The Go runtime multiplexes thousands of goroutines onto a small number of OS threads using an M:N scheduling model (M goroutines mapped to N OS threads).

package main

import (
    "fmt"
    "runtime"
    "sync"
)

func main() {
    // GOMAXPROCS(0) reports, without changing it, how many OS threads may
    // execute Go code at the same time (by default, the number of logical CPUs)
    fmt.Println("GOMAXPROCS:", runtime.GOMAXPROCS(0))

    var wg sync.WaitGroup
    for i := 0; i < 10000; i++ {
        wg.Add(1)
        go func(id int) {
            defer wg.Done()
            // Each goroutine starts with a stack of a few KB that grows on demand
            // OS threads typically reserve 1-8MB of stack
            _ = id
        }(i)
    }

    wg.Wait()
    fmt.Println("All 10,000 goroutines completed")
}

Key differences to mention in an interview: goroutines start with a small stack (about 2KB in current releases) that grows dynamically, versus the 1-8MB typically reserved for an OS thread's stack. Context switching between goroutines is handled in user space by the Go scheduler, avoiding the more expensive kernel-level context switches of OS threads. This makes spawning 100,000 goroutines practical, while 100,000 OS threads would typically exhaust system resources.
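The memory claim can be checked empirically. The following is a rough sketch, not a benchmark: stackBytesPerGoroutine is a hypothetical helper that parks a batch of goroutines and compares runtime.MemStats.StackInuse before and after. The exact figure varies by Go version and platform, so no expected value is shown.

```go
package main

import (
    "fmt"
    "runtime"
    "sync"
)

// stackBytesPerGoroutine is a hypothetical helper: it parks n goroutines
// and reports the average growth in stack memory per goroutine.
func stackBytesPerGoroutine(n int) uint64 {
    var before, after runtime.MemStats
    runtime.GC()
    runtime.ReadMemStats(&before)

    stop := make(chan struct{})
    var ready, done sync.WaitGroup
    ready.Add(n)
    done.Add(n)
    for i := 0; i < n; i++ {
        go func() {
            defer done.Done()
            ready.Done() // signal that this goroutine is running
            <-stop       // park until released
        }()
    }
    ready.Wait()
    runtime.ReadMemStats(&after)

    close(stop)
    done.Wait()

    if after.StackInuse <= before.StackInuse {
        return 0
    }
    return (after.StackInuse - before.StackInuse) / uint64(n)
}

func main() {
    fmt.Println("live goroutines before:", runtime.NumGoroutine())
    fmt.Printf("approx. stack bytes per goroutine: %d\n", stackBytesPerGoroutine(10000))
}
```

The result should land in the low kilobytes, several orders of magnitude below a typical thread stack reservation.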

Q: What happens if a goroutine panics?

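An unrecovered panic in any goroutine crashes the entire program. Unlike an uncaught exception in a Java or Python thread, which typically terminates only that thread, a Go panic that reaches the top of its goroutine's stack takes down the whole process. The panic unwinds only the panicking goroutine's own call stack, not the stack of the goroutine that spawned it, so the only way to catch it is with recover() inside a deferred function within the same goroutine.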

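package main

import (
    "fmt"
    "sync"
)

// safeGo runs fn in a new goroutine and recovers any panic it raises
func safeGo(wg *sync.WaitGroup, fn func()) {
    wg.Add(1)
    go func() {
        defer wg.Done() // deferred first, so it runs last, after the recover below
        defer func() {
            if r := recover(); r != nil {
                fmt.Println("recovered from panic:", r)
            }
        }()
        fn() // execute the actual work
    }()
}

func main() {
    var wg sync.WaitGroup
    safeGo(&wg, func() {
        panic("something went wrong")
    })

    // Wait for the goroutine to finish. A bare select {} here would block
    // forever and end in a deadlock error once the goroutine has exited.
    wg.Wait()
}

Interviewers look for awareness that production Go services wrap goroutine launches in a recovery pattern. Note that errgroup collects returned errors rather than recovering panics, so a function passed to g.Go that might panic should still recover on its own.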
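A common variation of the recovery pattern turns the panic into an ordinary error so the caller can decide what to do with it. The sketch below assumes a hypothetical helper named runSafely; it is one way to do this, not a standard library API.

```go
package main

import (
    "errors"
    "fmt"
)

// runSafely is a hypothetical helper: it runs fn and converts a panic
// into an ordinary error via the named return value.
func runSafely(fn func() error) (err error) {
    defer func() {
        if r := recover(); r != nil {
            err = fmt.Errorf("recovered panic: %v", r)
        }
    }()
    return fn()
}

func main() {
    errs := make(chan error, 2)

    go func() { errs <- runSafely(func() error { panic("boom") }) }()
    go func() { errs <- runSafely(func() error { return errors.New("plain failure") }) }()

    // Both outcomes arrive as errors; the order depends on scheduling
    for i := 0; i < 2; i++ {
        fmt.Println("task result:", <-errs)
    }
}
```

Because recover runs in the same goroutine as the panic, the process keeps running and the failure travels over the channel like any other error.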

Channel Semantics: Buffered, Unbuffered, and Directional

Channel questions reveal whether a candidate truly understands Go's concurrency model or just memorizes patterns.

Q: What is the difference between a buffered and an unbuffered channel?

An unbuffered channel (make(chan T)) requires both sender and receiver to be ready simultaneously — the send blocks until another goroutine receives. A buffered channel (make(chan T, n)) allows up to n values to be sent without blocking.

package main

import "fmt"

func main() {
    // Unbuffered: send blocks until receive is ready
    ch := make(chan string)
    go func() {
        ch <- "hello" // blocks here until main reads
    }()
    msg := <-ch
    fmt.Println(msg)

    // Buffered: send does not block until buffer is full
    buf := make(chan int, 3)
    buf <- 1 // does not block (buffer has space)
    buf <- 2 // does not block
    buf <- 3 // does not block
    // buf <- 4 would block: buffer is full

    fmt.Println(<-buf, <-buf, <-buf) // 1 2 3
}

The follow-up interviewers often ask: "When would you choose one over the other?" Unbuffered channels enforce synchronization, which is useful when the sender must know the receiver has taken the value. Buffered channels decouple sender and receiver timing, which is useful for work queues or rate limiting where some slack is acceptable.
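When blocking on a full buffer is not acceptable, a select with a default case gives a non-blocking send. The sketch below uses a hypothetical helper named trySend to show the point at which a buffered channel stops accepting values.

```go
package main

import "fmt"

// trySend is a hypothetical helper: it attempts a send without blocking
// and reports whether the value was accepted.
func trySend(ch chan<- int, v int) bool {
    select {
    case ch <- v:
        return true
    default:
        // Buffer full, or (for an unbuffered channel) no receiver waiting
        return false
    }
}

func main() {
    buf := make(chan int, 2)
    for i := 1; i <= 3; i++ {
        ok := trySend(buf, i)
        fmt.Printf("send %d accepted: %v (len=%d, cap=%d)\n", i, ok, len(buf), cap(buf))
    }
}
```

The first two sends fit in the buffer and the third is rejected, which is the situation where a plain send would have blocked.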

Q: What happens when you close a channel?

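Closing a channel signals that no more values will be sent. Values already in the buffer can still be received; once they are drained, receives return immediately with the zero value and ok set to false. Sending on a closed channel causes a panic, as does closing a channel twice. A range loop over a channel exits when the channel is closed and drained.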

package main

import "fmt"

func producer(ch chan<- int, count int) {
    for i := 0; i < count; i++ {
        ch <- i
    }
    close(ch) // signal: no more values
}

func main() {
    ch := make(chan int, 5)
    go producer(ch, 5)

    // range exits automatically when channel closes
    for val := range ch {
        fmt.Println("received:", val)
    }

    // Reading from closed channel returns zero value + false
    val, ok := <-ch
    fmt.Printf("after close: val=%d, ok=%v\n", val, ok)
}

A critical point: only the sender should close a channel, never the receiver. If a channel is closed while another goroutine is still sending on it, that send panics. With several senders, close the channel once from a coordinator after all of them have finished.
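One detail worth demonstrating is that closing a buffered channel does not discard what is already queued. The sketch below, with a hypothetical drain helper, reads the remaining values and then shows the zero value and false that follow.

```go
package main

import "fmt"

// drain is a hypothetical helper: it receives until the channel reports
// closed-and-empty, returning every value that was still queued.
func drain(ch <-chan int) []int {
    var got []int
    for {
        v, ok := <-ch
        if !ok {
            return got
        }
        got = append(got, v)
    }
}

func main() {
    ch := make(chan int, 3)
    ch <- 10
    ch <- 20
    close(ch) // values already in the buffer remain readable

    fmt.Println(drain(ch)) // [10 20]

    v, ok := <-ch
    fmt.Println(v, ok) // 0 false
}
```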

The Select Statement: Multiplexing Channels

Q: How does select work, and what happens when multiple cases are ready?

The select statement blocks until one of its channel operations can proceed. When multiple cases are ready simultaneously, Go picks one at random — this prevents starvation of any particular case.

package main

import (
    "context"
    "fmt"
    "time"
)

func fetchFromAPI(ctx context.Context, url string) (string, error) {
    // Both channels are buffered so the goroutine can finish its send
    // even if the caller has already returned on ctx.Done()
    resultCh := make(chan string, 1)
    errCh := make(chan error, 1) // unused in this simulation; a real call would report failures here

    go func() {
        // Simulate API call
        time.Sleep(200 * time.Millisecond)
        resultCh <- fmt.Sprintf("data from %s", url)
    }()

    select {
    case result := <-resultCh:
        return result, nil
    case err := <-errCh:
        return "", err
    case <-ctx.Done():
        // Context cancelled or timed out
        return "", ctx.Err()
    }
}

func main() {
    ctx, cancel := context.WithTimeout(context.Background(), 500*time.Millisecond)
    defer cancel()

    result, err := fetchFromAPI(ctx, "https://api.example.com/data")
    if err != nil {
        fmt.Println("error:", err)
        return
    }
    fmt.Println(result)
}

Interviewers test two things with select: understanding of the random selection rule, and the ability to combine channels with context.Context for timeout and cancellation patterns.
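The random selection rule is easy to observe. In this sketch the hypothetical countSelections function keeps two channels permanently ready and counts which case select picks; the split is roughly even but differs from run to run, so no exact output is shown.

```go
package main

import "fmt"

// countSelections is a hypothetical helper: it runs select n times with
// two always-ready channels and counts how often each case is chosen.
func countSelections(n int) (a, b int) {
    chA := make(chan struct{}, 1)
    chB := make(chan struct{}, 1)
    for i := 0; i < n; i++ {
        chA <- struct{}{}
        chB <- struct{}{}
        select {
        case <-chA:
            a++
            <-chB // drain the other channel for the next round
        case <-chB:
            b++
            <-chA
        }
    }
    return a, b
}

func main() {
    a, b := countSelections(1000)
    fmt.Printf("case A chosen %d times, case B chosen %d times\n", a, b)
}
```

If select always preferred the first ready case, case B would never run; the counts show that neither case is starved.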

Common Concurrency Patterns Asked in Interviews

Senior-level Go interviews almost always include a question about implementing one of these patterns from scratch.

Fan-Out/Fan-In Pattern

Q: Implement a fan-out/fan-in pipeline that processes items concurrently.

Fan-out distributes work across multiple goroutines. Fan-in collects results from multiple goroutines into a single channel.

package main

import (
    "fmt"
    "sync"
)

// generator produces values on a channel
func generator(nums ...int) <-chan int {
    out := make(chan int)
    go func() {
        for _, n := range nums {
            out <- n
        }
        close(out)
    }()
    return out
}

// square reads from input, squares each value
func square(in <-chan int) <-chan int {
    out := make(chan int)
    go func() {
        for n := range in {
            out <- n * n
        }
        close(out)
    }()
    return out
}

// fanIn merges multiple channels into one
func fanIn(channels ...<-chan int) <-chan int {
    var wg sync.WaitGroup
    merged := make(chan int)

    for _, ch := range channels {
        wg.Add(1)
        go func(c <-chan int) {
            defer wg.Done()
            for val := range c {
                merged <- val
            }
        }(ch)
    }

    go func() {
        wg.Wait()
        close(merged) // close after all inputs are drained
    }()

    return merged
}

func main() {
    in := generator(2, 3, 4, 5, 6)

    // Fan out: two goroutines reading from same channel
    c1 := square(in)
    c2 := square(in)

    // Fan in: merge results
    for result := range fanIn(c1, c2) {
        fmt.Println(result)
    }
}

The key insight interviewers want: the generator channel is shared between c1 and c2, so each value is processed by exactly one worker (not duplicated). The fanIn function uses a WaitGroup to know when all input channels are drained before closing the merged channel.
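The same idea generalizes to any number of workers. The following sketch folds fan-out and fan-in into one hypothetical function, squareAll, and sorts the results because the arrival order depends on scheduling.

```go
package main

import (
    "fmt"
    "sort"
    "sync"
)

// squareAll is a hypothetical helper: it fans nums out to `workers`
// goroutines over one shared channel and fans the results back in.
func squareAll(nums []int, workers int) []int {
    in := make(chan int)
    out := make(chan int)

    // Producer: the only sender on in, so it is the one that closes it
    go func() {
        defer close(in)
        for _, n := range nums {
            in <- n
        }
    }()

    // Fan out: each value is received by exactly one worker
    var wg sync.WaitGroup
    for w := 0; w < workers; w++ {
        wg.Add(1)
        go func() {
            defer wg.Done()
            for n := range in {
                out <- n * n
            }
        }()
    }

    // Close out only after every worker has finished sending
    go func() {
        wg.Wait()
        close(out)
    }()

    // Fan in: collect results, then sort for a deterministic order
    var results []int
    for r := range out {
        results = append(results, r)
    }
    sort.Ints(results)
    return results
}

func main() {
    fmt.Println(squareAll([]int{2, 3, 4, 5, 6}, 3)) // [4 9 16 25 36]
}
```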

Worker Pool with errgroup

Q: How would you implement a bounded worker pool with error handling?

The golang.org/x/sync/errgroup package (maintained by the Go team, but distributed as a separate module rather than as part of the standard library) solves this cleanly. It manages goroutine lifecycles, returns the first error, and integrates with context for cancellation.

package main

import (
    "context"
    "fmt"

    "golang.org/x/sync/errgroup"
)

func processItem(ctx context.Context, id int) error {
    // Check for cancellation before heavy work
    select {
    case <-ctx.Done():
        return ctx.Err()
    default:
    }

    if id == 7 {
        return fmt.Errorf("failed to process item %d", id)
    }

    fmt.Printf("processed item %d\n", id)
    return nil
}

func main() {
    g, ctx := errgroup.WithContext(context.Background())
    g.SetLimit(3) // maximum 3 concurrent goroutines

    for i := 0; i < 10; i++ {
        id := i // per-iteration copy; redundant since Go 1.22 but harmless
        g.Go(func() error {
            return processItem(ctx, id)
        })
    }

    // Wait blocks until all goroutines finish
    // Returns the first non-nil error
    if err := g.Wait(); err != nil {
        fmt.Println("pipeline error:", err)
    }
}

This pattern remains the recommended approach in current Go releases. The SetLimit method was added to golang.org/x/sync in 2022; because that module is versioned separately from the Go toolchain, it is not tied to a particular Go release. It avoids the need to implement semaphore-based concurrency limiting by hand.
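For comparison, this is roughly what the manual, semaphore-based approach looks like using only the standard library. It is a sketch under assumptions: runLimited is a hypothetical function, the work is simulated with a short sleep, and error handling is left out.

```go
package main

import (
    "fmt"
    "sync"
    "sync/atomic"
    "time"
)

// runLimited is a hypothetical helper: it runs `tasks` jobs with at most
// `limit` in flight and returns the highest concurrency it observed.
func runLimited(tasks, limit int) int32 {
    sem := make(chan struct{}, limit) // buffered channel used as a semaphore
    var wg sync.WaitGroup
    var inFlight, peak atomic.Int32

    for i := 0; i < tasks; i++ {
        sem <- struct{}{} // acquire a slot; blocks while `limit` jobs are running
        wg.Add(1)
        go func() {
            defer wg.Done()
            defer func() { <-sem }() // release the slot

            n := inFlight.Add(1)
            // Record the peak with a compare-and-swap loop
            for {
                p := peak.Load()
                if n <= p || peak.CompareAndSwap(p, n) {
                    break
                }
            }
            time.Sleep(5 * time.Millisecond) // simulate work
            inFlight.Add(-1)
        }()
    }

    wg.Wait()
    return peak.Load()
}

func main() {
    fmt.Println("peak concurrency within limit:", runLimited(20, 3) <= 3) // true
}
```

The semaphore, the WaitGroup, and any error collection all have to be wired together by hand here, which is the bookkeeping errgroup takes over.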

Race Conditions and sync Primitives

Q: How do you detect and prevent race conditions in Go?

Go provides a built-in race detector activated with the -race flag. It detects unsynchronized concurrent access to shared memory at runtime.

package main

import (
    "fmt"
    "sync"
    "sync/atomic"
)

// BAD: race condition, do not use in production
func unsafeCounter() int {
    counter := 0
    var wg sync.WaitGroup
    for i := 0; i < 1000; i++ {
        wg.Add(1)
        go func() {
            defer wg.Done()
            counter++ // DATA RACE: concurrent read/write
        }()
    }
    wg.Wait()
    return counter // result is non-deterministic
}

// GOOD: atomic operations for simple counters
func atomicCounter() int64 {
    var counter atomic.Int64
    var wg sync.WaitGroup
    for i := 0; i < 1000; i++ {
        wg.Add(1)
        go func() {
            defer wg.Done()
            counter.Add(1) // thread-safe atomic increment
        }()
    }
    wg.Wait()
    return counter.Load() // always 1000
}

// GOOD: mutex for complex shared state
type SafeMap struct {
    mu   sync.RWMutex
    data map[string]int
}

// NewSafeMap initializes the inner map; writing to a nil map would panic
func NewSafeMap() *SafeMap {
    return &SafeMap{data: make(map[string]int)}
}

func (m *SafeMap) Set(key string, val int) {
    m.mu.Lock() // exclusive lock for writes
    defer m.mu.Unlock()
    m.data[key] = val
}

func (m *SafeMap) Get(key string) (int, bool) {
    m.mu.RLock() // shared lock for reads
    defer m.mu.RUnlock()
    v, ok := m.data[key]
    return v, ok
}

func main() {
    fmt.Println("unsafe:", unsafeCounter()) // unpredictable
    fmt.Println("atomic:", atomicCounter()) // always 1000

    m := NewSafeMap()
    m.Set("answer", 42)
    v, ok := m.Get("answer")
    fmt.Println("map:", v, ok) // map: 42 true
}

The interview answer should cover three synchronization strategies: sync.Mutex / sync.RWMutex for complex shared state, sync/atomic for simple counters and flags, and channels for communicating between goroutines ("Do not communicate by sharing memory; instead, share memory by communicating"). Running go test -race ./... should be part of every CI pipeline; the detector only reports races on code paths that actually execute, so its value depends on test coverage.
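The code above shows the atomic and mutex strategies; the third, channels, can be sketched as follows. A single goroutine owns the counter and everyone else sends it increments, so no memory is shared. The function name countViaChannel is an assumption made for this example.

```go
package main

import (
    "fmt"
    "sync"
)

// countViaChannel is a hypothetical helper: one goroutine owns the sum,
// and workers send increments over a channel instead of sharing memory.
func countViaChannel(workers int) int {
    incs := make(chan int)
    total := make(chan int)

    // Owner goroutine: the only code that reads or writes sum
    go func() {
        sum := 0
        for d := range incs {
            sum += d
        }
        total <- sum
    }()

    var wg sync.WaitGroup
    for i := 0; i < workers; i++ {
        wg.Add(1)
        go func() {
            defer wg.Done()
            incs <- 1
        }()
    }

    wg.Wait()
    close(incs) // all senders are done, so closing here is safe
    return <-total
}

func main() {
    fmt.Println("channel-owned counter:", countViaChannel(1000)) // 1000
}
```

For a plain counter this is heavier than atomic.Int64, but the ownership model scales better once the state involves several fields that must change together.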

Context and Cancellation Patterns

Q: Explain how context.Context controls goroutine lifecycles.

The context package provides a mechanism to propagate cancellation signals, deadlines, and request-scoped values across goroutine boundaries. Every function that starts or runs long-lived work should accept a context.Context as its first parameter.

package main

import (
    "context"
    "fmt"
    "time"
)

// worker simulates a long-running task
func worker(ctx context.Context, id int, results chan<- string) {
    select {
    case <-time.After(time.Duration(id*100) * time.Millisecond):
        results <- fmt.Sprintf("worker %d: done", id)
    case <-ctx.Done():
        results <- fmt.Sprintf("worker %d: cancelled (%v)", id, ctx.Err())
    }
}

func main() {
    // Parent context with 250ms deadline
    ctx, cancel := context.WithTimeout(context.Background(), 250*time.Millisecond)
    defer cancel()

    results := make(chan string, 5)

    // Launch 5 workers with increasing durations
    for i := 1; i <= 5; i++ {
        go worker(ctx, i, results)
    }

    // Collect all results
    for i := 0; i < 5; i++ {
        fmt.Println(<-results)
    }
}

Workers 1 and 2 complete within the 250ms deadline. Workers 3, 4, and 5 receive the cancellation signal via ctx.Done(). This pattern is fundamental to building resilient HTTP servers and microservices in Go: every request handler receives a context that propagates cancellation when the client disconnects.

Never store a context.Context in a struct field. The official Go documentation explicitly states: "Do not store Contexts inside a struct type; instead, pass a Context explicitly to each function that needs it." Interviewers test this to gauge whether a candidate follows Go conventions.
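Passing the context explicitly also makes propagation easy to follow. In this sketch, which uses the hypothetical functions waitFor and parentCancelReachesChild, a child context has an hour-long timeout of its own but is cancelled as soon as its parent is.

```go
package main

import (
    "context"
    "errors"
    "fmt"
    "time"
)

// waitFor takes ctx as its first parameter, per Go convention, and
// blocks until the context is done.
func waitFor(ctx context.Context) error {
    <-ctx.Done()
    return ctx.Err()
}

// parentCancelReachesChild is a hypothetical helper: it reports whether
// cancelling a parent context also cancels a child derived from it.
func parentCancelReachesChild() bool {
    parent, cancelParent := context.WithCancel(context.Background())
    defer cancelParent()
    child, cancelChild := context.WithTimeout(parent, time.Hour)
    defer cancelChild()

    go func() {
        time.Sleep(10 * time.Millisecond)
        cancelParent() // the child's own deadline is still an hour away
    }()

    // The child reports Canceled (from the parent), not DeadlineExceeded
    return errors.Is(waitFor(child), context.Canceled)
}

func main() {
    fmt.Println("child cancelled by parent:", parentCancelReachesChild()) // true
}
```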

Deadlock Detection: Tricky Interview Questions

Q: Will this code deadlock? Why?

Deadlock questions are popular because they test the candidate's ability to reason about goroutine scheduling and channel operations.

package main

func main() {
    ch := make(chan int)
    ch <- 42 // DEADLOCK: unbuffered send with no receiver
    // The main goroutine blocks here forever
    // Go runtime detects this: "fatal error: all goroutines are asleep - deadlock!"
}

The fix is straightforward: either make the channel buffered (make(chan int, 1)) or start a receiving goroutine before sending. The runtime reports this case because every goroutine, here just main, is blocked. If even one goroutine is still runnable (e.g., a background HTTP server), the runtime will not report a partial deadlock.

The Go runtime only detects deadlocks when every goroutine in the program is blocked. In real applications with HTTP servers or background workers, leaked goroutines that are deadlocked will not trigger the runtime detector. Tools like pprof and goroutine dumps (runtime.Stack) are necessary to diagnose these issues in production.
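A goroutine dump makes such a leak visible. The sketch below deliberately leaks one goroutine blocked on a send and then searches the output of runtime.Stack for its state. The helper names are assumptions made for this example, and the short sleep is only there to give the leaked goroutine time to block.

```go
package main

import (
    "fmt"
    "runtime"
    "strings"
    "time"
)

// leak starts a goroutine that blocks forever on a send nobody receives.
func leak() {
    ch := make(chan int)
    go func() { ch <- 1 }()
}

// dumpGoroutines returns the stack traces of all goroutines.
func dumpGoroutines() string {
    buf := make([]byte, 1<<20)
    n := runtime.Stack(buf, true) // true: include every goroutine
    return string(buf[:n])
}

// leakedSenders is a hypothetical helper: it leaks one goroutine and
// counts how many goroutines the dump shows blocked in a channel send.
func leakedSenders() int {
    leak()
    time.Sleep(50 * time.Millisecond) // give the goroutine time to block
    return strings.Count(dumpGoroutines(), "[chan send")
}

func main() {
    fmt.Println("goroutines blocked on send:", leakedSenders())
    fmt.Println("main is still running, so the runtime reports no deadlock")
}
```

In a long-running service the same information is available from the goroutine profile exposed by pprof, without adding code to dump stacks by hand.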

Advanced Pattern: Rate-Limited Concurrent Processing

Q: How would you implement rate-limited concurrent API calls?

This question tests the ability to combine multiple concurrency primitives into a cohesive solution.

package main

import (
    "context"
    "fmt"
    "sync"
    "time"
)

// RateLimiter controls concurrent and temporal access
type RateLimiter struct {
    semaphore chan struct{} // limits concurrency
    ticker    *time.Ticker  // limits rate
}

func NewRateLimiter(maxConcurrent int, interval time.Duration) *RateLimiter {
    return &RateLimiter{
        semaphore: make(chan struct{}, maxConcurrent),
        ticker:    time.NewTicker(interval),
    }
}

// Stop releases the ticker once the limiter is no longer needed
func (rl *RateLimiter) Stop() {
    rl.ticker.Stop()
}

func (rl *RateLimiter) Execute(ctx context.Context, fn func() error) error {
    // Wait for rate limit tick
    select {
    case <-rl.ticker.C:
    case <-ctx.Done():
        return ctx.Err()
    }

    // Acquire concurrency slot
    select {
    case rl.semaphore <- struct{}{}:
    case <-ctx.Done():
        return ctx.Err()
    }
    defer func() { <-rl.semaphore }() // release slot

    return fn()
}

func main() {
    rl := NewRateLimiter(3, 100*time.Millisecond)
    defer rl.Stop()

    ctx, cancel := context.WithTimeout(context.Background(), 2*time.Second)
    defer cancel()

    var wg sync.WaitGroup
    for i := 0; i < 10; i++ {
        wg.Add(1)
        go func(id int) {
            defer wg.Done()
            err := rl.Execute(ctx, func() error {
                fmt.Printf("[%v] processing %d\n", time.Now().Format("04:05.000"), id)
                time.Sleep(150 * time.Millisecond) // simulate work
                return nil
            })
            if err != nil {
                fmt.Printf("item %d: %v\n", id, err)
            }
        }(i)
    }

    wg.Wait()
}

This pattern combines a channel-based semaphore (for concurrency limiting) with a ticker (for rate limiting). The double select with context checking ensures graceful shutdown. With the values shown, the ticker is the tighter constraint: one call starts every 100ms and each takes 150ms, so at most two run at once and the semaphore of three never fills. This is the kind of production-ready answer that distinguishes senior candidates.

Conclusion

- Goroutines are user-space threads managed by the Go runtime with M:N scheduling; always recover panics in spawned goroutines

- Unbuffered channels synchronize sender and receiver; buffered channels decouple timing; choose based on whether the sender needs confirmation

- The select statement multiplexes channel operations with random selection when multiple are ready; combine with context.Context for timeouts

- Fan-out/fan-in and worker pools (via errgroup.SetLimit) are the two most frequently asked concurrency patterns

- Use sync.Mutex for complex shared state, sync/atomic for simple counters, and channels for goroutine communication

- Always run go test -race in CI to catch data races; partial deadlocks require pprof to diagnose

- Never store context.Context in structs; pass it as the first function parameter

- Rate limiting in Go combines channel semaphores with tickers, wrapped in context-aware select statements
